Flosum Configuration

Now that the Jenkins server is set up for Automation test execution, we can set up the Salesforce org where Flosum is installed so that it can queue the Jenkins job we just created.

To trigger our Automation job on another system, Flosum needs a pipeline that calls a webhook. Before you can run a pipeline, you need a valid branch in Flosum to run it from. The entire process is detailed in the following steps.

- Registering your Organization

- Creating a Snapshot

- Committing to Branch

- Creating a Webhook

- Creating a Pipeline

Registering your Organization

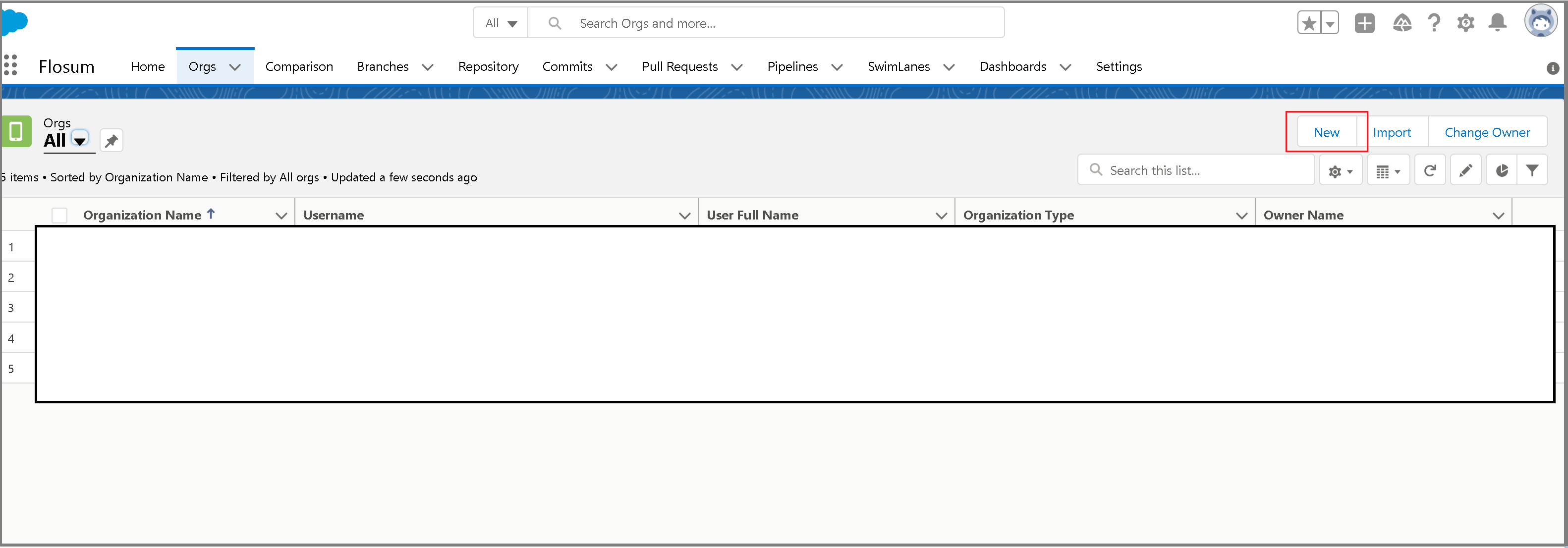

The first step in setting up the pipeline in Flosum is to add an organization. Navigate to the Orgs tab in your Flosum org and click New.

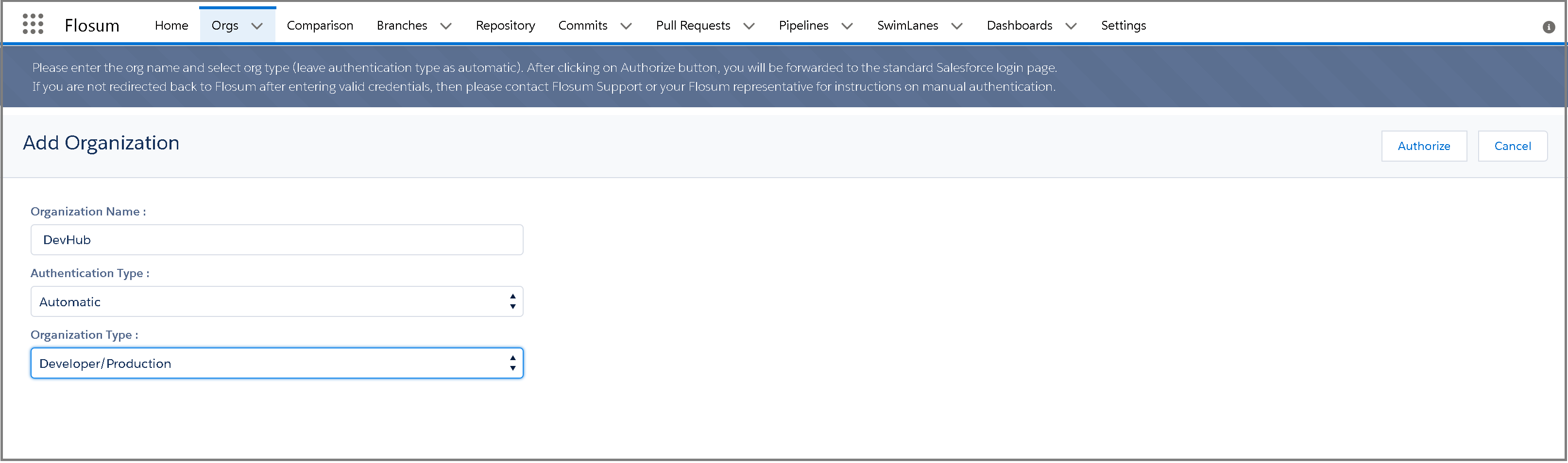

Enter the Organization Name, Authentication Type (automatic is easier), and Organization Type. This is the org that Flosum connects to in order to trigger the pipeline. In practice, it is whichever org you deploy to or change, where those deployments or changes should trigger your Automation tests elsewhere. For example, if you want to run Automation tests following a deployment or change in a Developer org, your setup might look like the one in the screenshot.

Once you have entered these details, authorize Flosum to connect to this org by clicking Authorize in the top right corner and completing the Salesforce authentication.

Success! Your org has been added to Flosum and we can continue on to the next step of creating a snapshot.

Creating a Snapshot

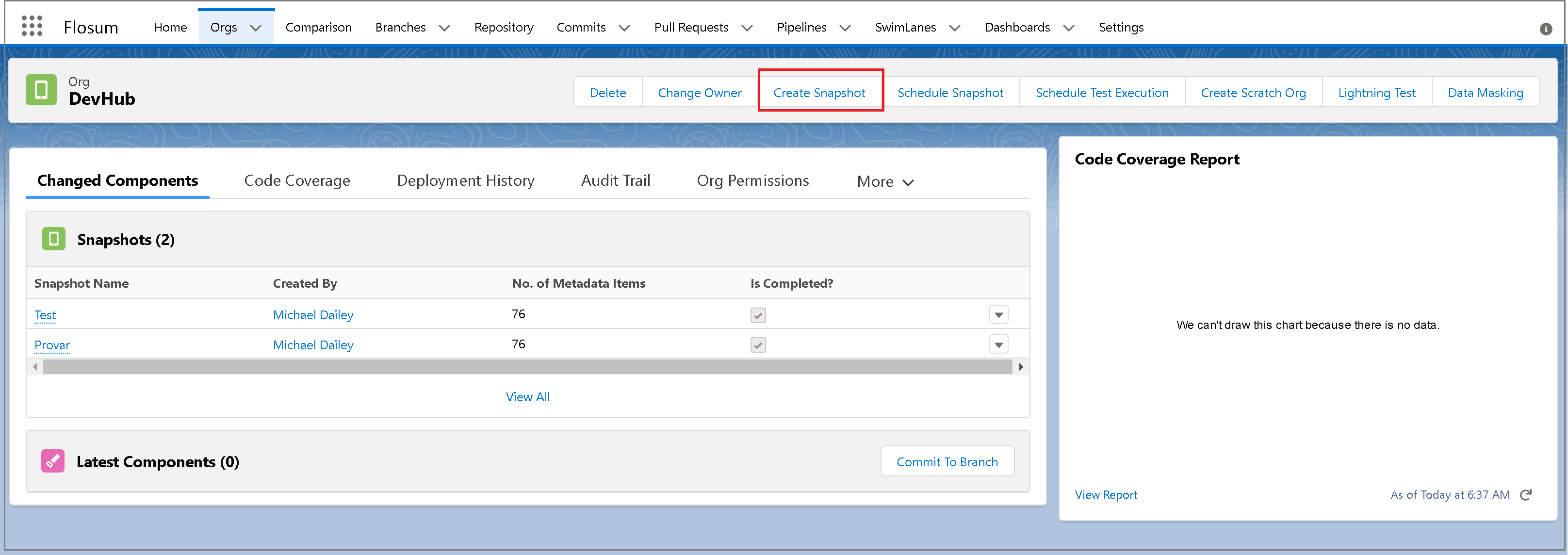

To create a snapshot, navigate to the org we just registered and select Create Snapshot.

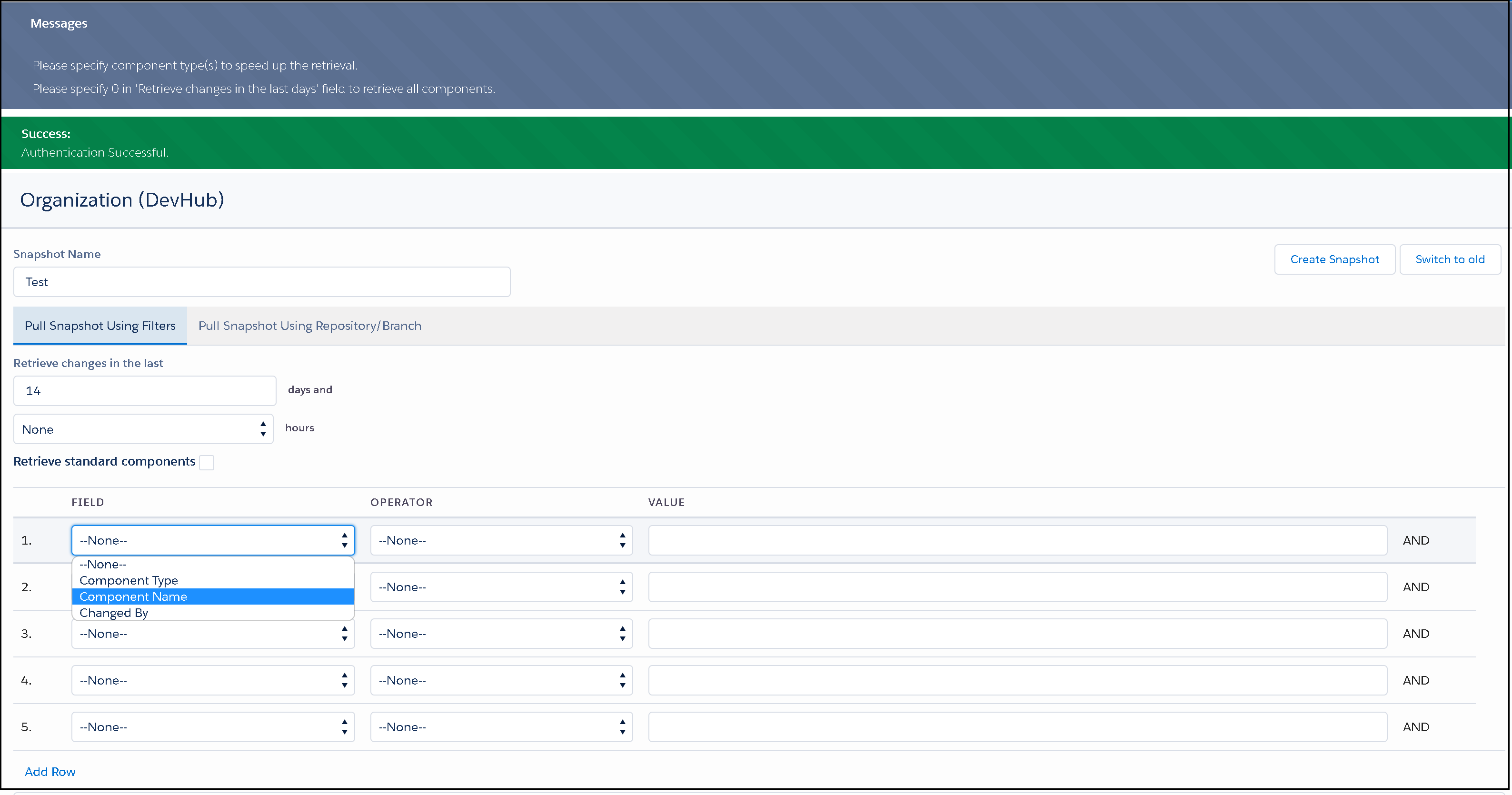

First, enter a name for your snapshot. A snapshot is essentially a capture of the metadata that you wish to deploy or validate. It can be partial or complete, and several options control which components it retrieves.

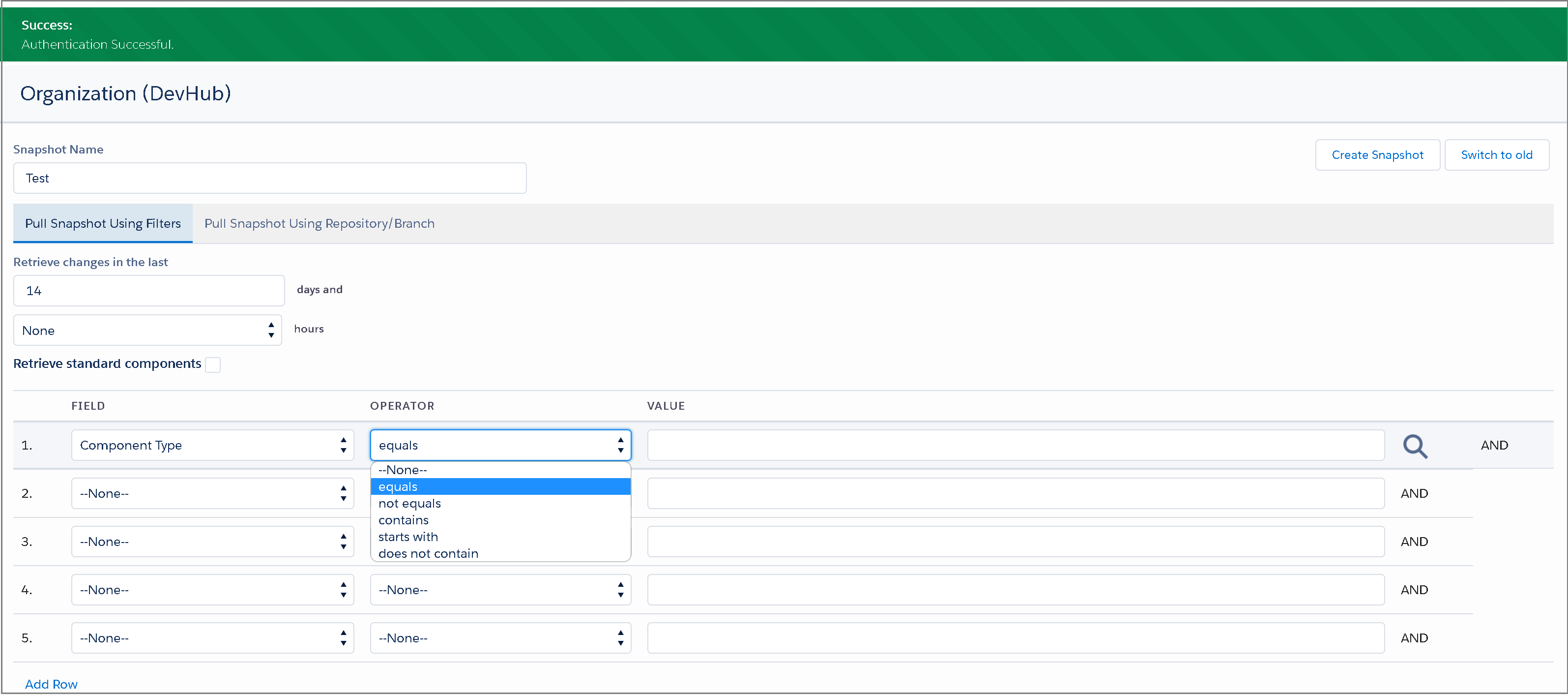

For example, you can set how many days back to retrieve changes for the selected components. You can also filter the components by component type, component name, or the changed by field.

The operator can be set to a wide variety of options, depending on which components you are trying to filter for.

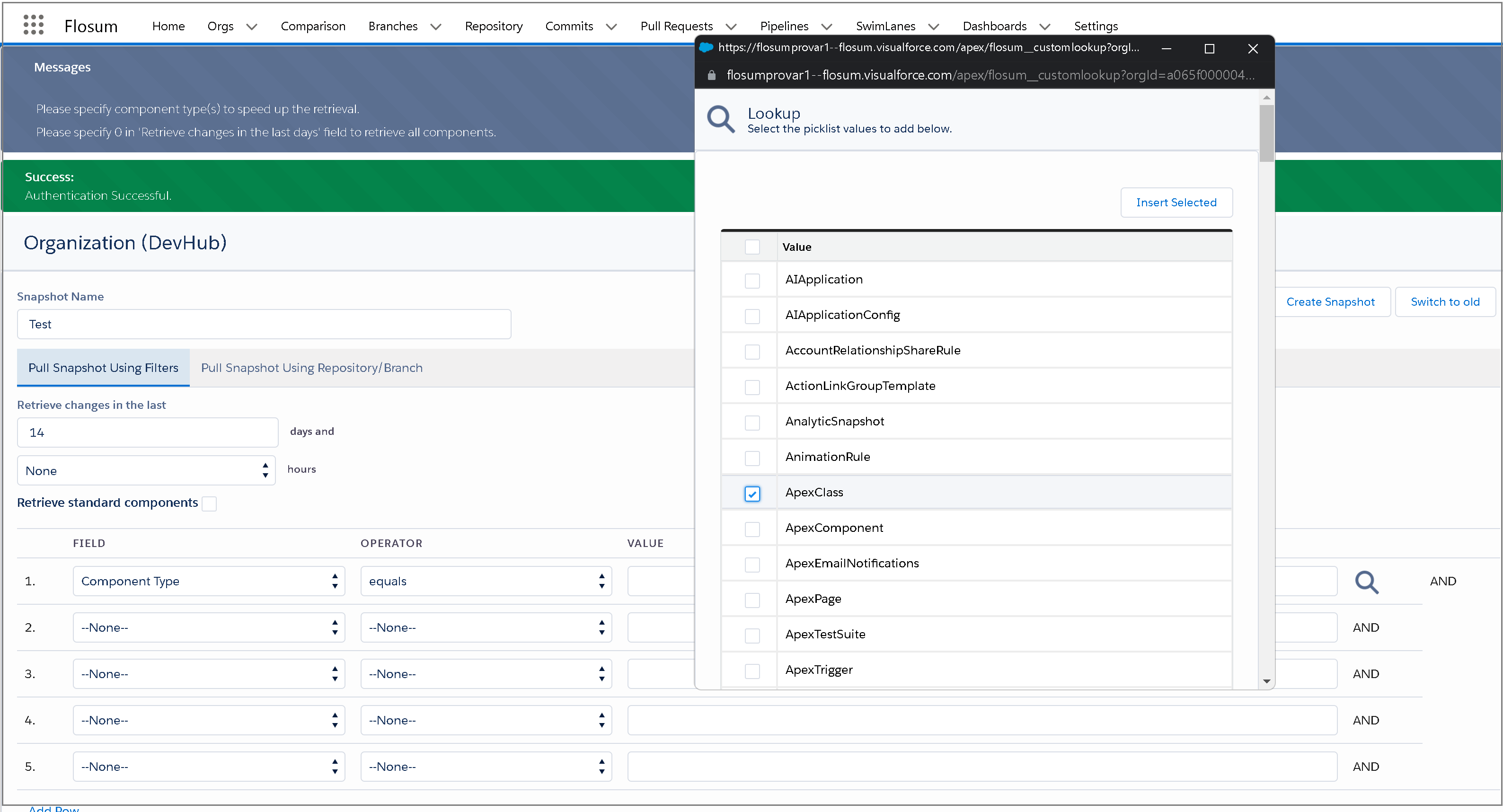

Once you’ve selected a field and an operator, you can use the search icon on the far right to search for specific components by value. You can add each individual component you want by checking the boxes next to the relevant item.

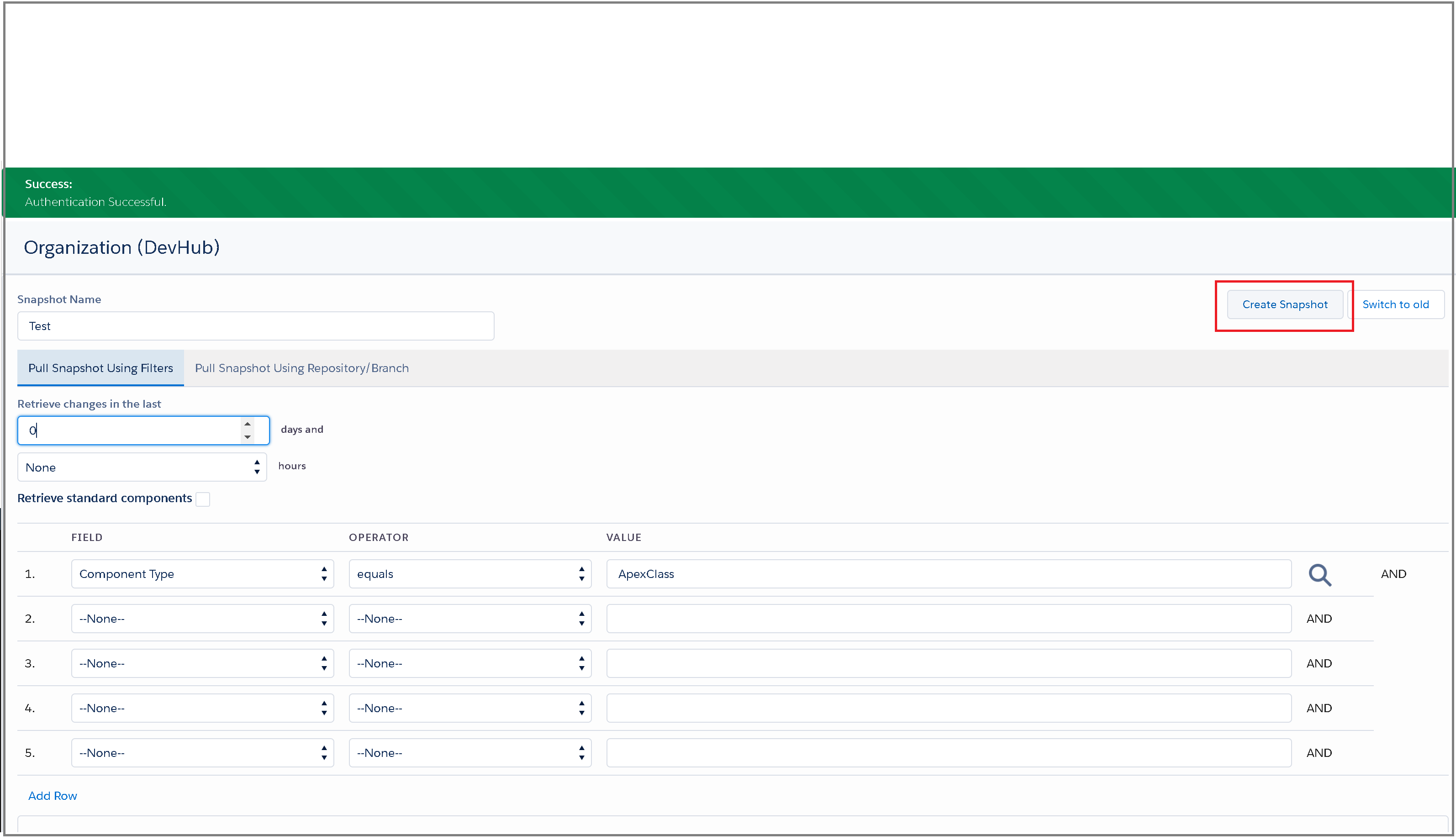

For testing purposes, we’ve kept it simple and set the number of retrieval days to ‘0’, which retrieves all components.

Once you are satisfied with the snapshot, click Create Snapshot in the top right corner.

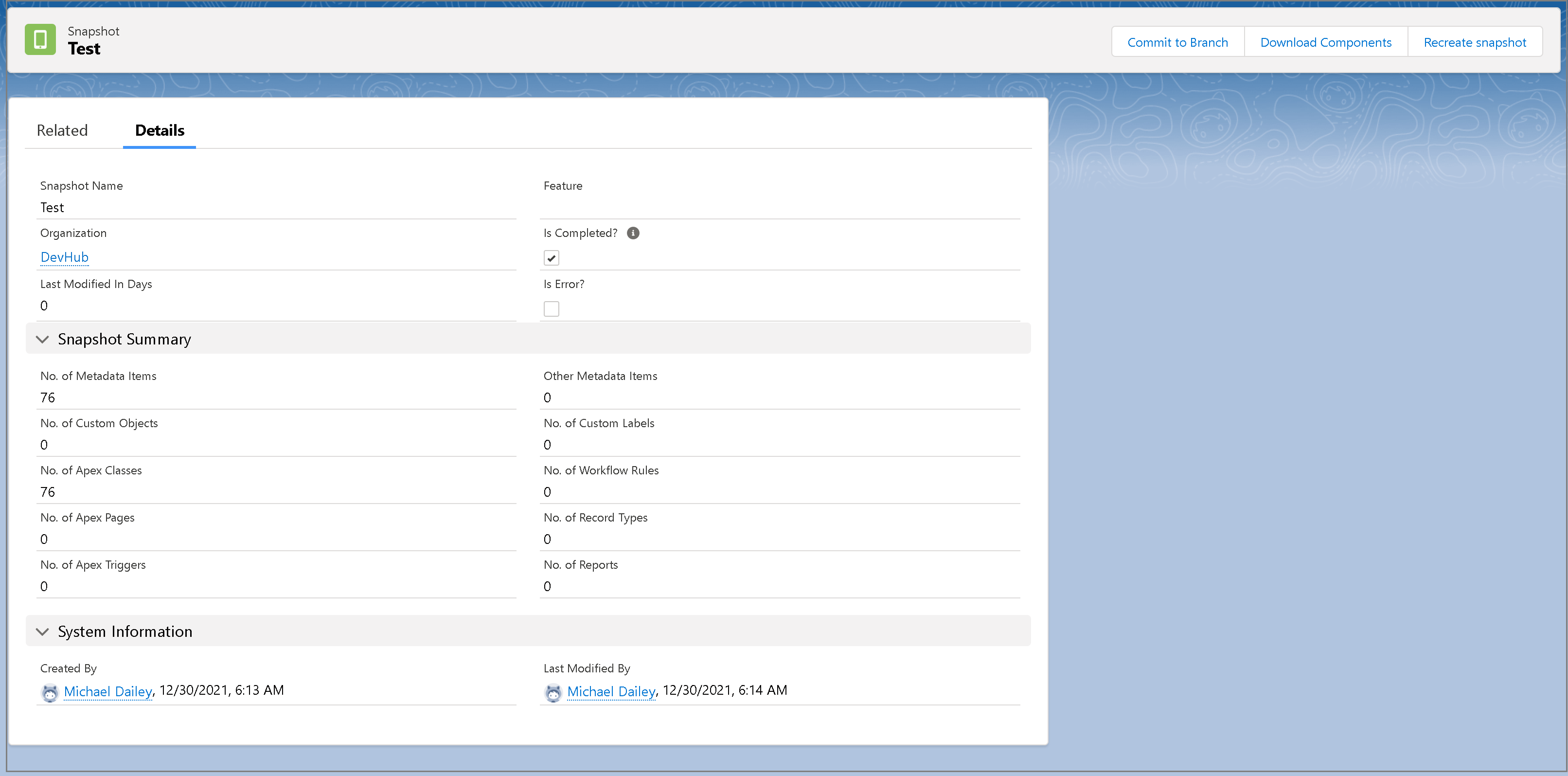

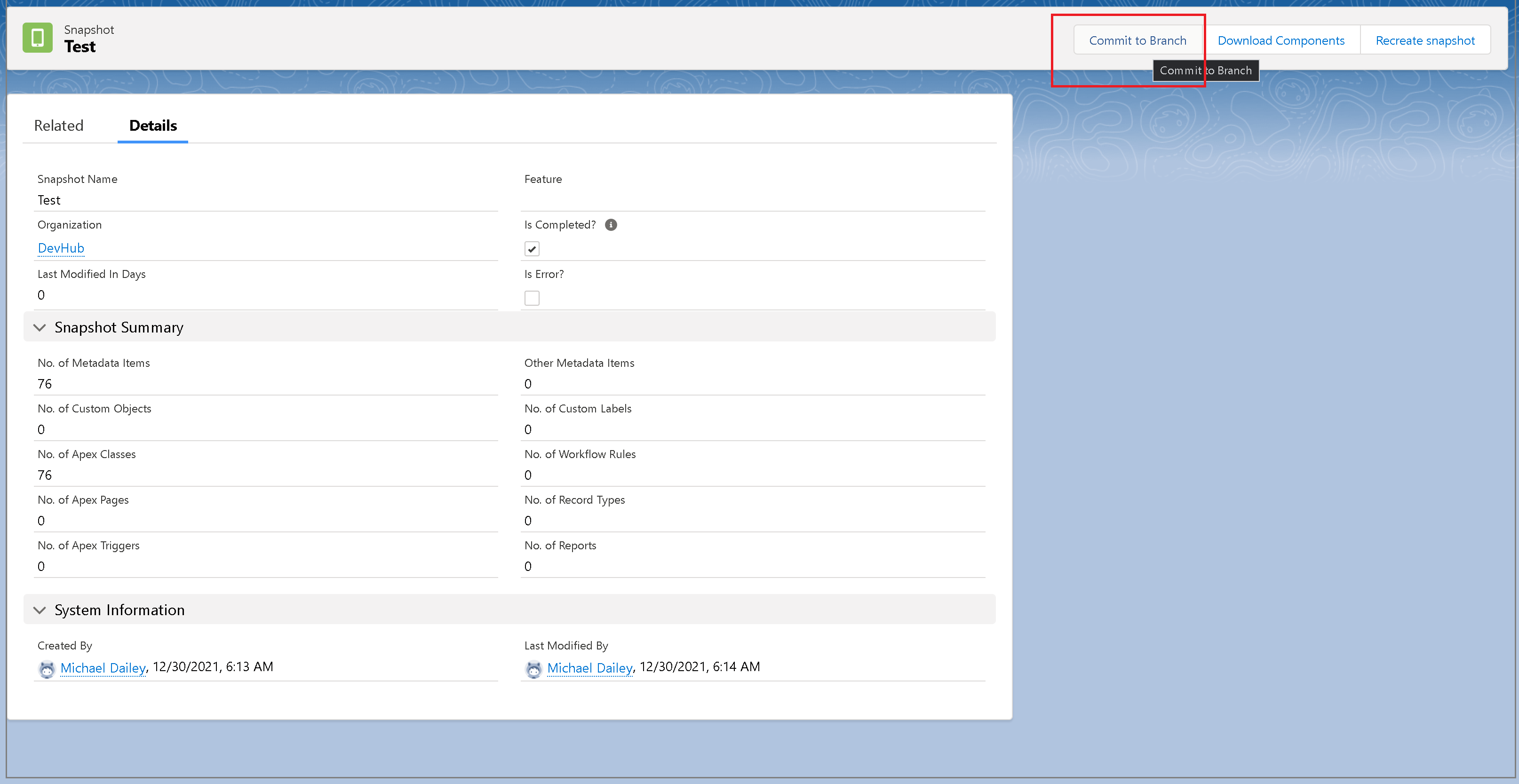

Give Flosum a minute or so to complete the snapshot process. You can track the status by refreshing the snapshot screen and checking whether the Is Completed? checkbox is selected. Additionally, make sure that the No. of Metadata Items is greater than ‘0’.

That’s it! Your snapshot is created from the Org we registered previously and we are ready to commit it.

Committing to Branch

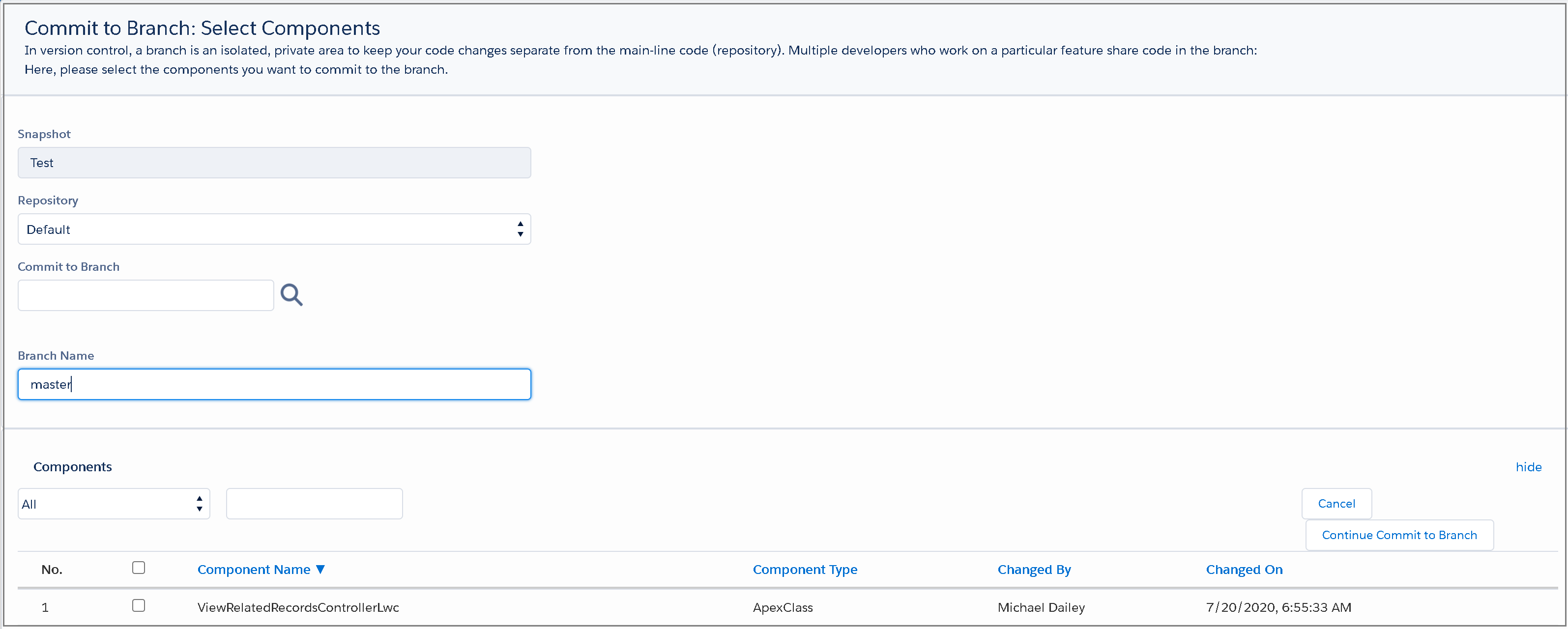

From the snapshot screen, the Commit to Branch step opens the next screen, where you select the components you’d like to commit.

Note that all of this is committed to Flosum’s native version control system, not to your personal or company repository. This lets you store all of the metadata directly in your Flosum org without having to set up your own Git repository just for this process.

If you do not have an existing branch in Flosum (which you can check by selecting the search icon next to the Commit to Branch field), then you can enter a name in the Branch Name field and Flosum will automatically create that branch for you.

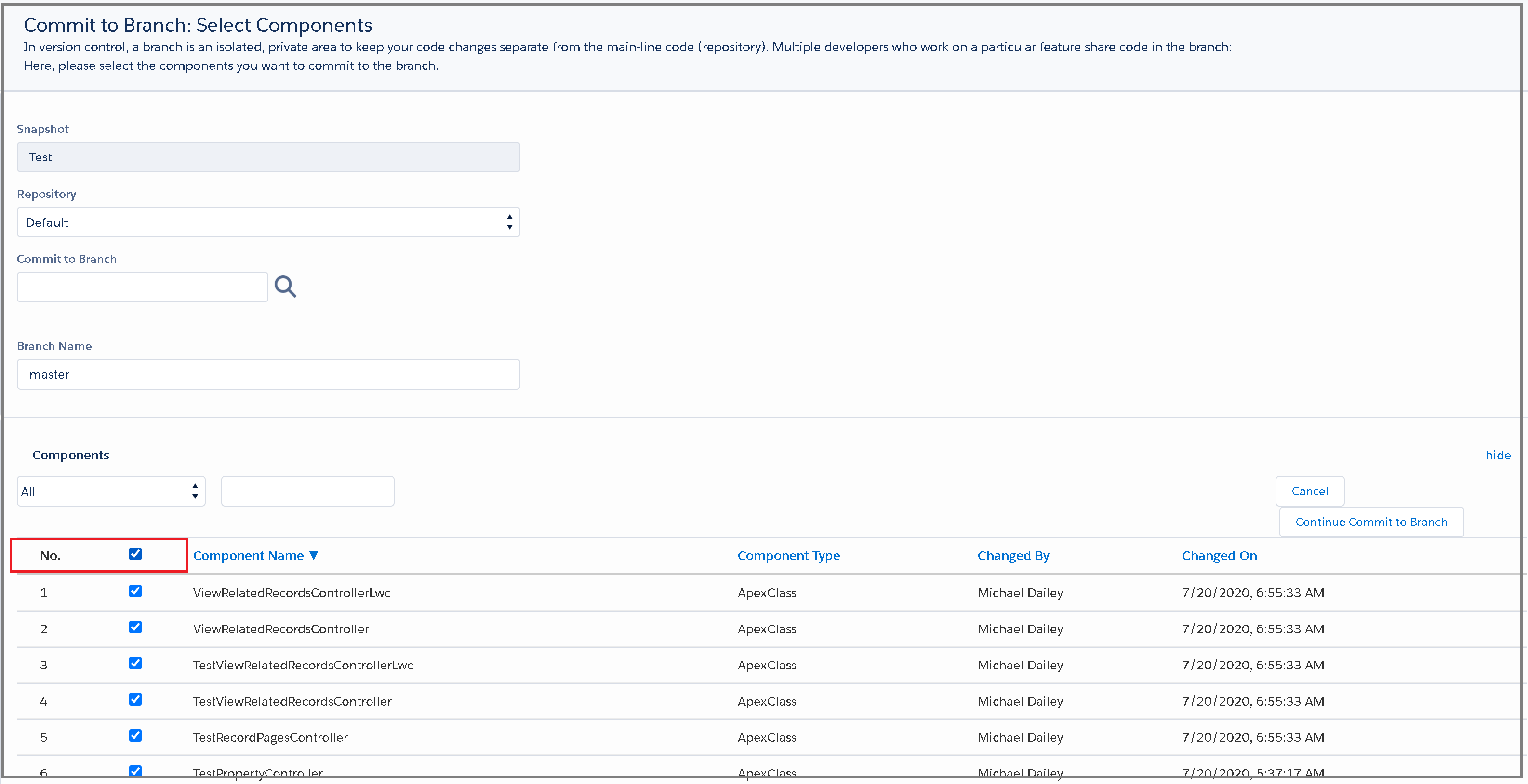

Next, you will select all of the components from the snapshot that you’d like to commit to the branch.

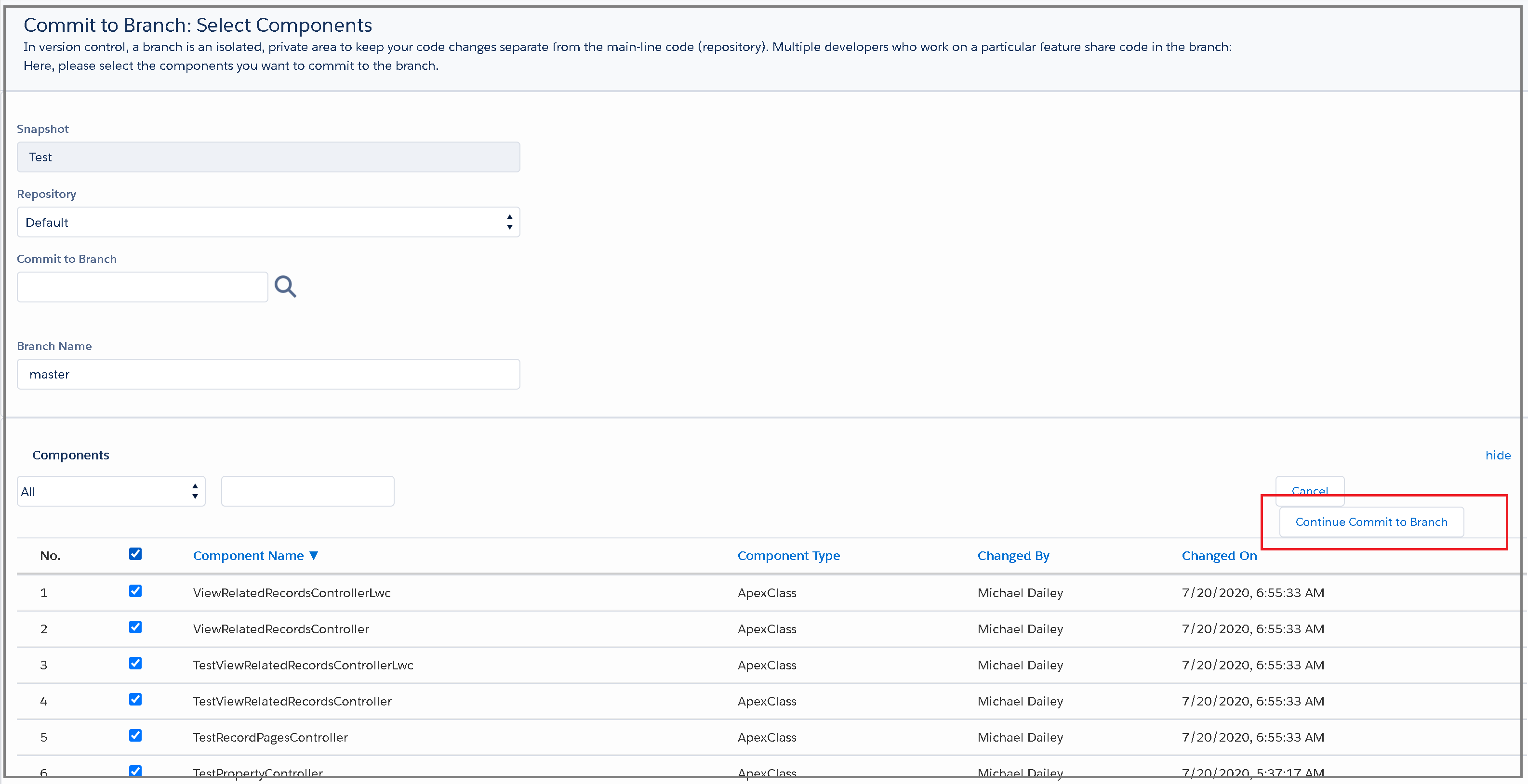

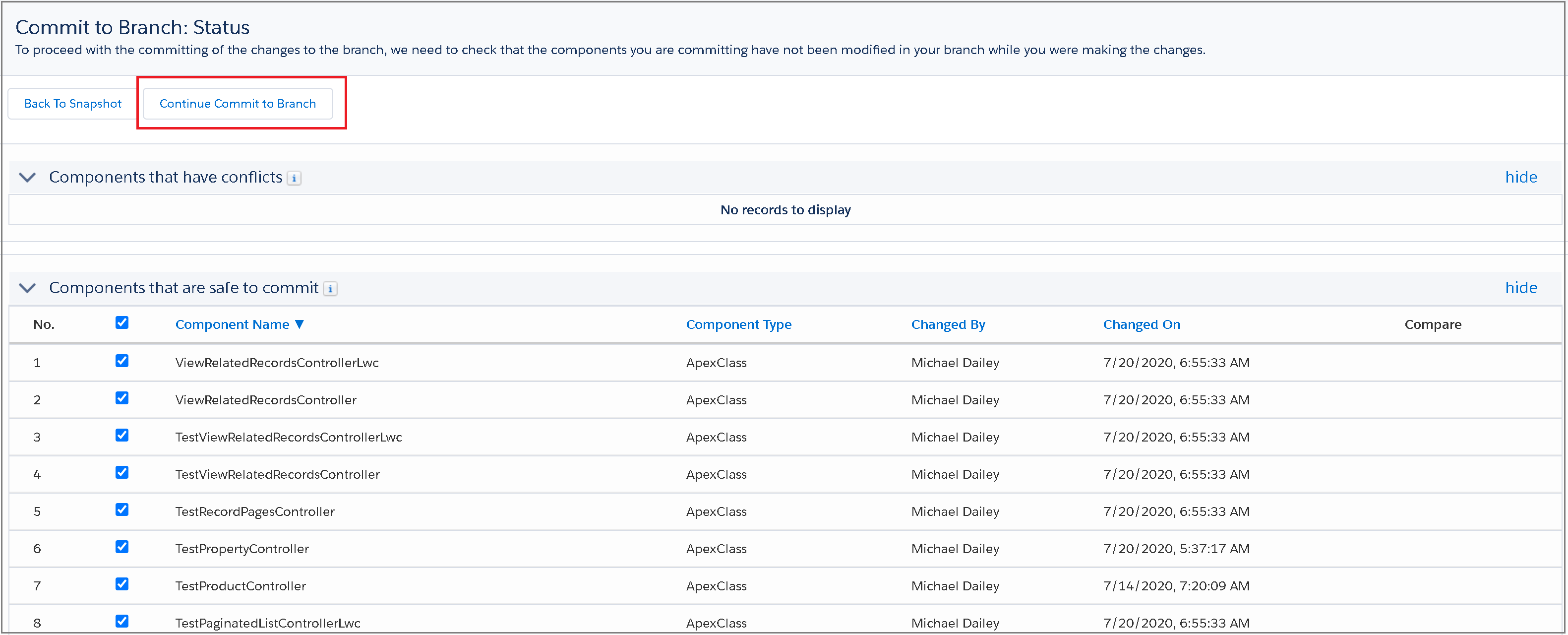

From there, select Continue Commit to Branch.

On the next screen, you need to verify all of the components you’d like to commit based on which components are considered safe to commit.

In this example, we’ve selected everything from our org snapshot. Once you’re happy with it, select Continue Commit to Branch to officially commit these components to the branch in Flosum.

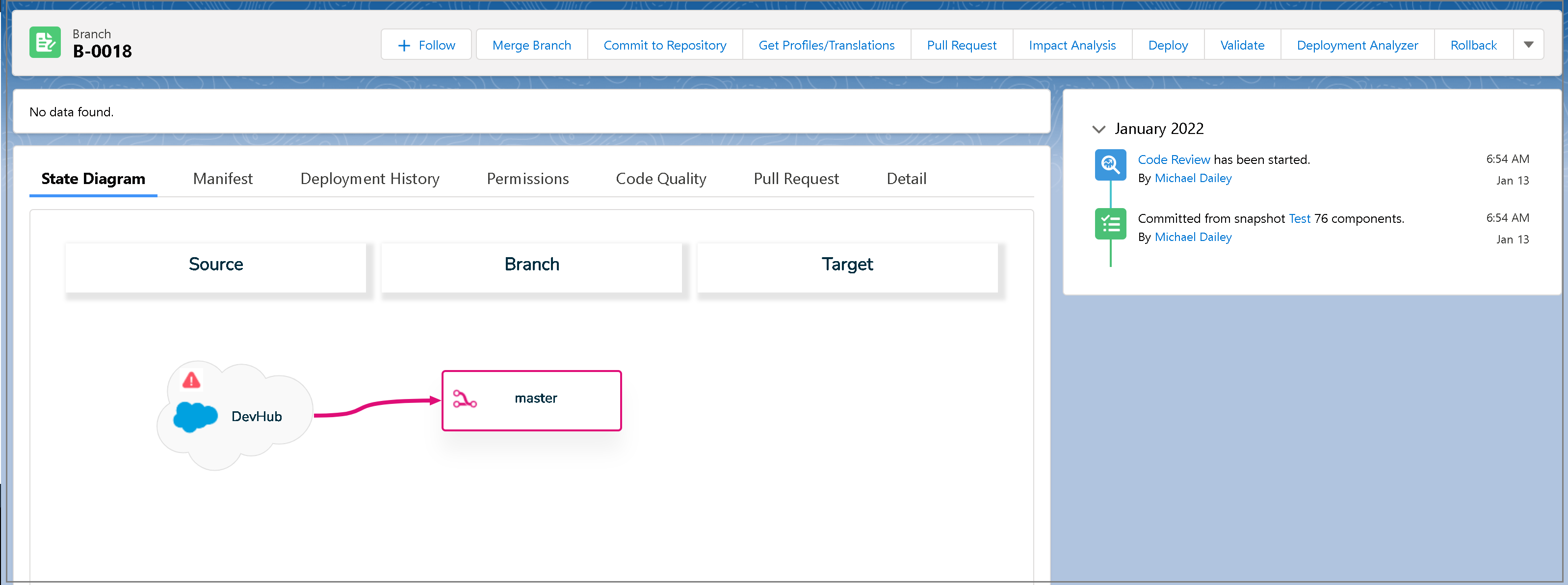

It will take Flosum a minute to process the commit, and then it will navigate you to the Branch view as shown below.

Now that you have a branch, we can begin setting up your Flosum Pipeline.

Creating a Webhook

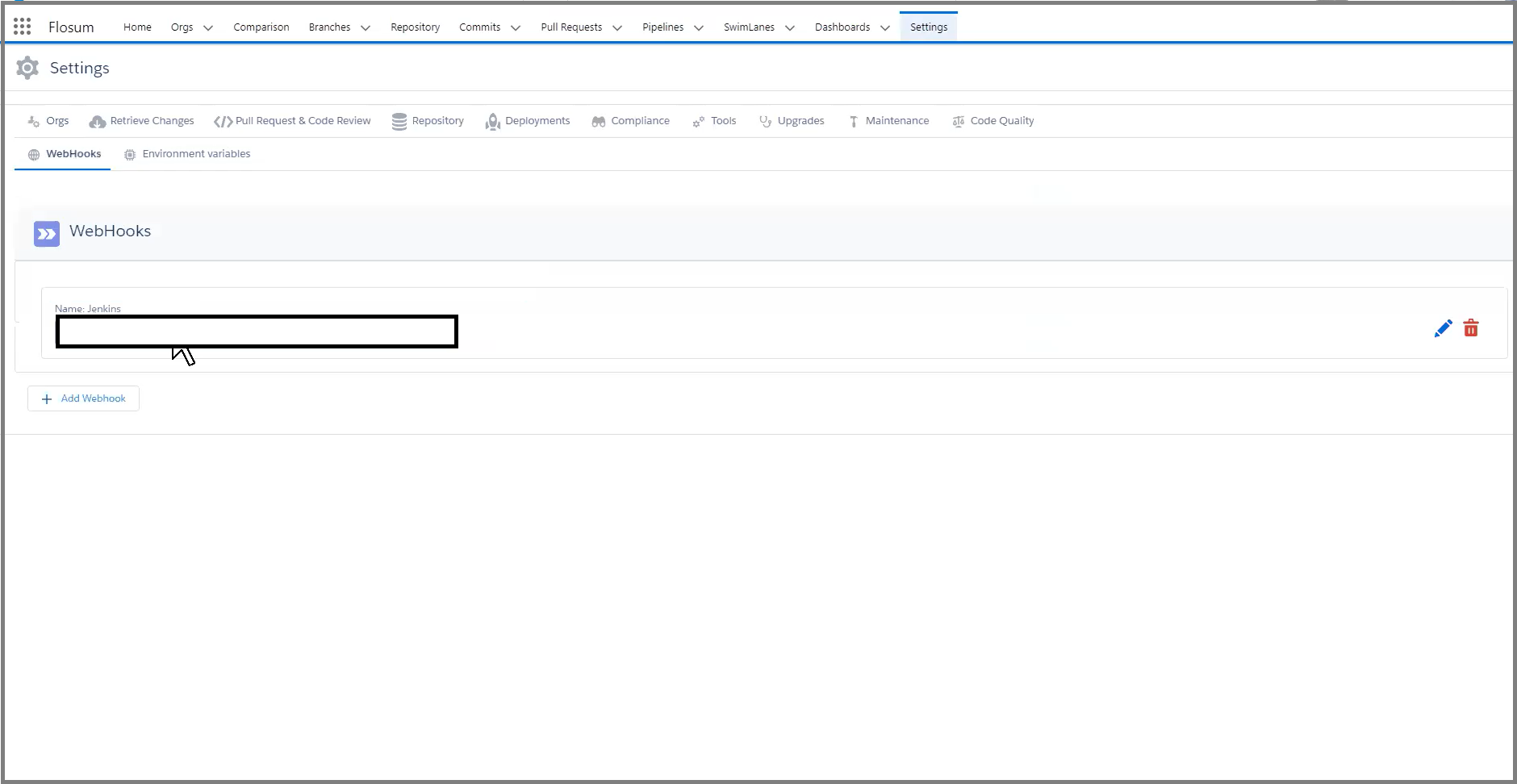

To create a webhook in Flosum, navigate to the Settings tab and select Webhooks.

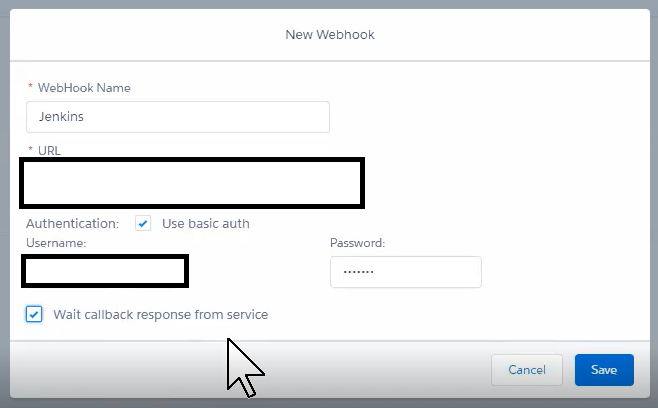

Click Add Webhook and enter the Webhook Name, URL, and your Jenkins authentication. For the Jenkins authentication, use a valid Jenkins API Token in place of the password. The token must be generated by the same user you are authenticating as, and it has to be set up on the Jenkins server before you add the webhook here.

The URL needs to be the build URL pointing to a specific Jenkins job. Please see the example given below.

Jenkins URL: https://demojenkins.com:8080

Job Name: FlosumTrigger

Build Parameters: TEST_PLAN=Regression, BUILD_FILE=build.xml

API Token Name: FlosumToken

Then the webhook URL would be the following:

https://demojenkins.com:8080/job/FlosumTrigger/buildWithParameters?token=FlosumToken&TEST_PLAN=Regression&BUILD_FILE=build.xml

You can also find this URL underneath the Build Triggers section on your Jenkins job.
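The webhook URL above can be assembled from its parts programmatically, which helps avoid typos when the build parameters change. The following is a minimal sketch using only the Python standard library; the function name `build_webhook_url` is hypothetical, and the values are the example values from this page.

```python
from urllib.parse import quote, urlencode


def build_webhook_url(jenkins_url, job_name, token, build_params):
    """Assemble a Jenkins buildWithParameters URL from its parts.

    build_webhook_url is an illustrative helper, not part of Flosum or Jenkins.
    """
    # The token goes first, followed by the build parameters in the given order.
    query = urlencode({"token": token, **build_params})
    # quote() escapes characters such as spaces in the job name.
    return f"{jenkins_url.rstrip('/')}/job/{quote(job_name)}/buildWithParameters?{query}"


webhook_url = build_webhook_url(
    "https://demojenkins.com:8080",
    "FlosumTrigger",
    "FlosumToken",
    {"TEST_PLAN": "Regression", "BUILD_FILE": "build.xml"},
)
print(webhook_url)
```

With the example values, the printed URL matches the one shown above.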

A reminder here that the URL of the Jenkins server must be reachable from your Salesforce Org. If your Jenkins server is behind a proxy or firewall, then it needs to be opened up to allow traffic from Salesforce IP ranges.
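Before saving the webhook, it can be useful to confirm that the Jenkins user and API token are accepted by triggering the job yourself. The sketch below assumes HTTP Basic authentication, which is how Jenkins accepts a username together with an API token; whether Flosum sends the credentials in exactly this way is an assumption. The function names and the placeholder credentials are hypothetical. Note that a successful call from your own machine does not prove that Jenkins is reachable from Salesforce.

```python
import base64
import urllib.request


def build_trigger_request(webhook_url, username, api_token):
    """Create a POST request that authenticates with a Jenkins user and API token."""
    # Basic authentication: base64("username:api_token") in the Authorization header.
    credentials = f"{username}:{api_token}".encode("utf-8")
    header_value = "Basic " + base64.b64encode(credentials).decode("ascii")
    return urllib.request.Request(
        webhook_url,
        method="POST",
        headers={"Authorization": header_value},
    )


def send_trigger_request(request, timeout=10):
    """Send the request and return the HTTP status code.

    urlopen raises urllib.error.HTTPError for non-2xx responses
    and urllib.error.URLError when the server cannot be reached.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


# Placeholder values for illustration only; replace them with your own.
request = build_trigger_request(
    "https://demojenkins.com:8080/job/FlosumTrigger/buildWithParameters",
    "jenkins-user",
    "example-api-token",
)
# To actually trigger the job, call: send_trigger_request(request)
```

A status of 201 from `send_trigger_request` means that Jenkins accepted the request and queued the build.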

Also, remember to select the Wait callback response from service checkbox so that the pipeline waits for the Jenkins webhook to return successfully before continuing to the next Pipeline step.

Now that the webhook is created, you can continue on to the pipeline creation.

Creating a Pipeline

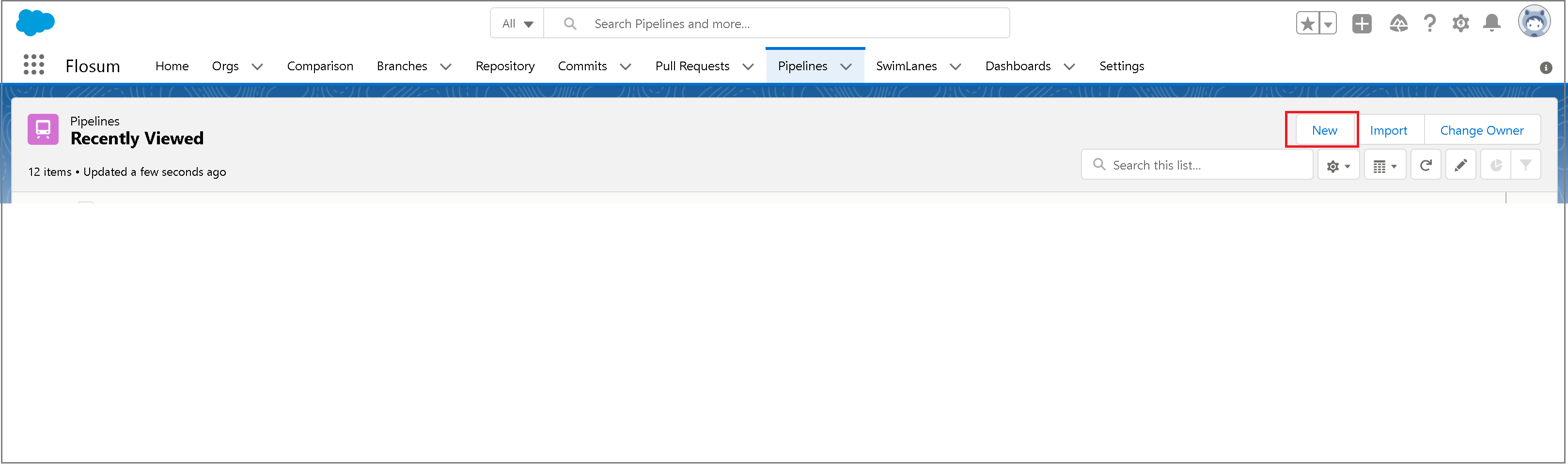

To create a Pipeline in Flosum, navigate to the Pipelines tab and select New.

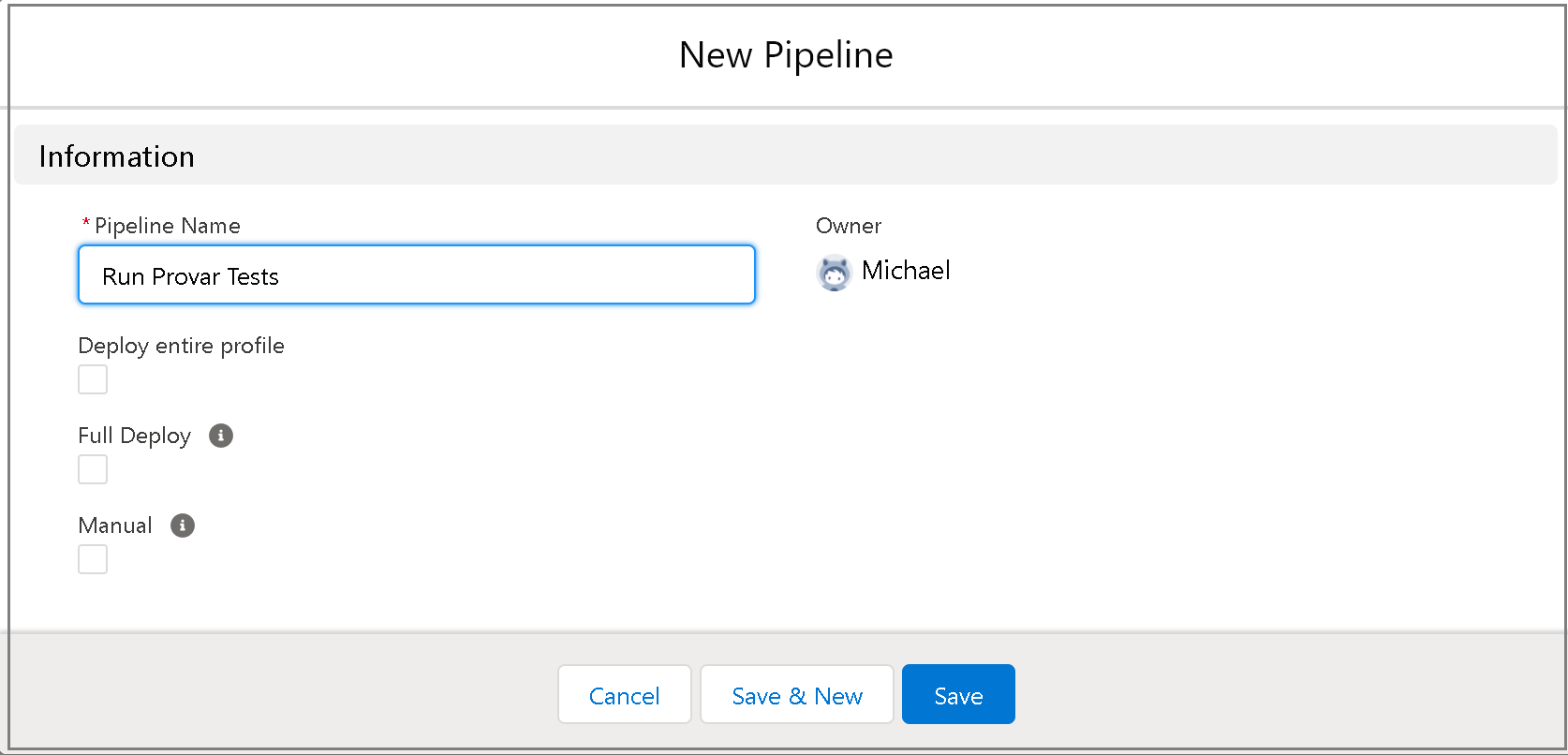

Enter the Pipeline Name, and leave the rest as the default.

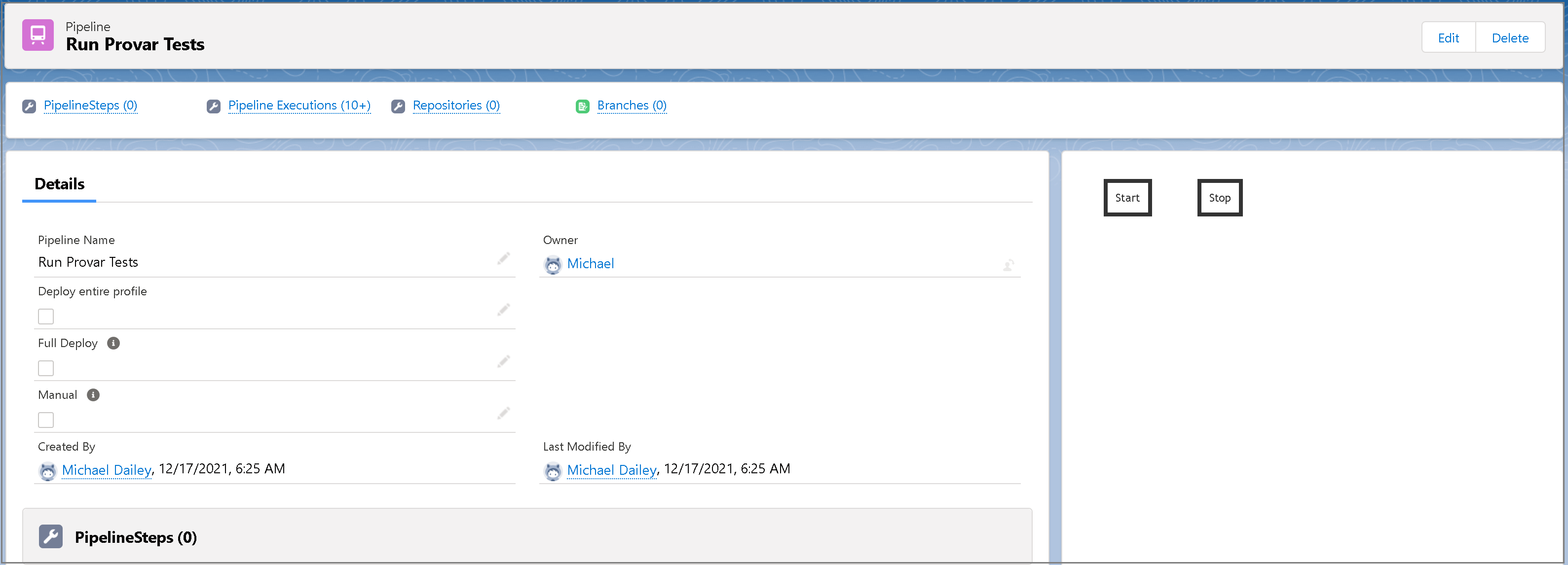

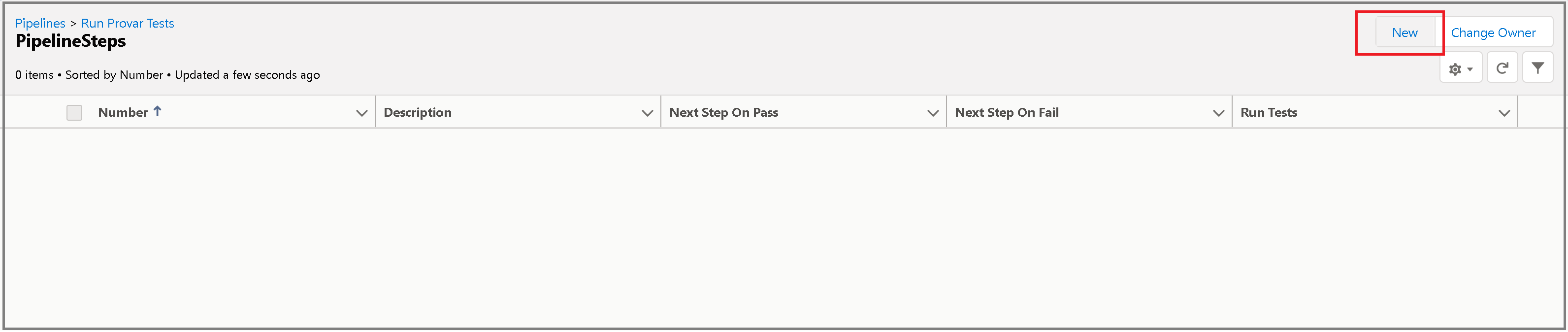

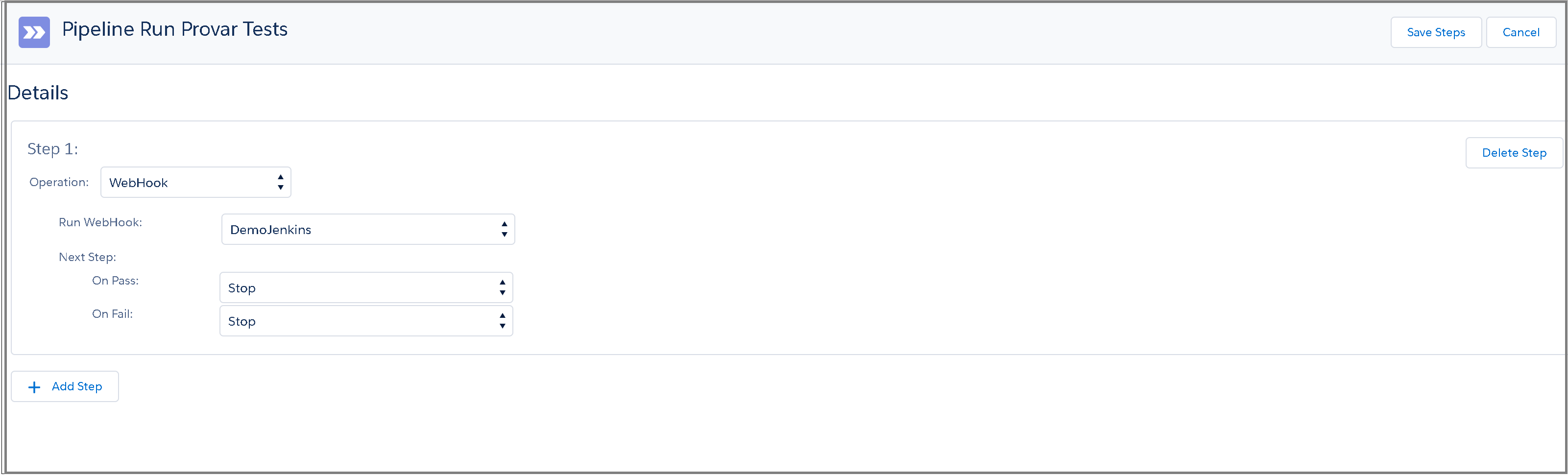

Next, you need to add some Pipeline steps. Select Pipeline Steps and once it loads, click New.

We’ve named our webhook Jenkins, so this is what the step looks like.

Since there is no next step here, the On Pass and On Fail fields can be left as Stop for now.

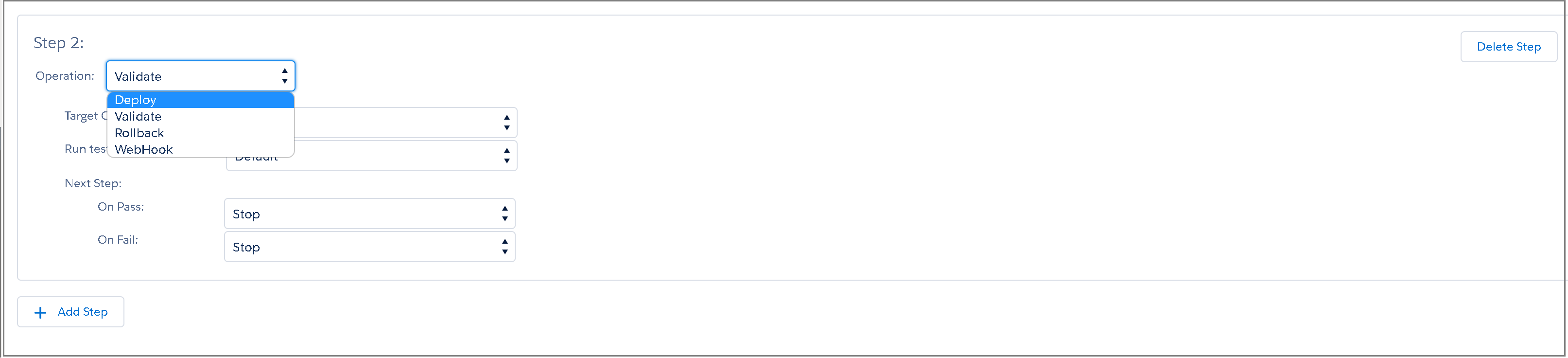

For the purposes of this integration, our pipeline only has a single step that will trigger our webhook to kick off our Automation tests. For a practical pipeline, you would have multiple pipeline steps that include deployment, validation, and rollback.

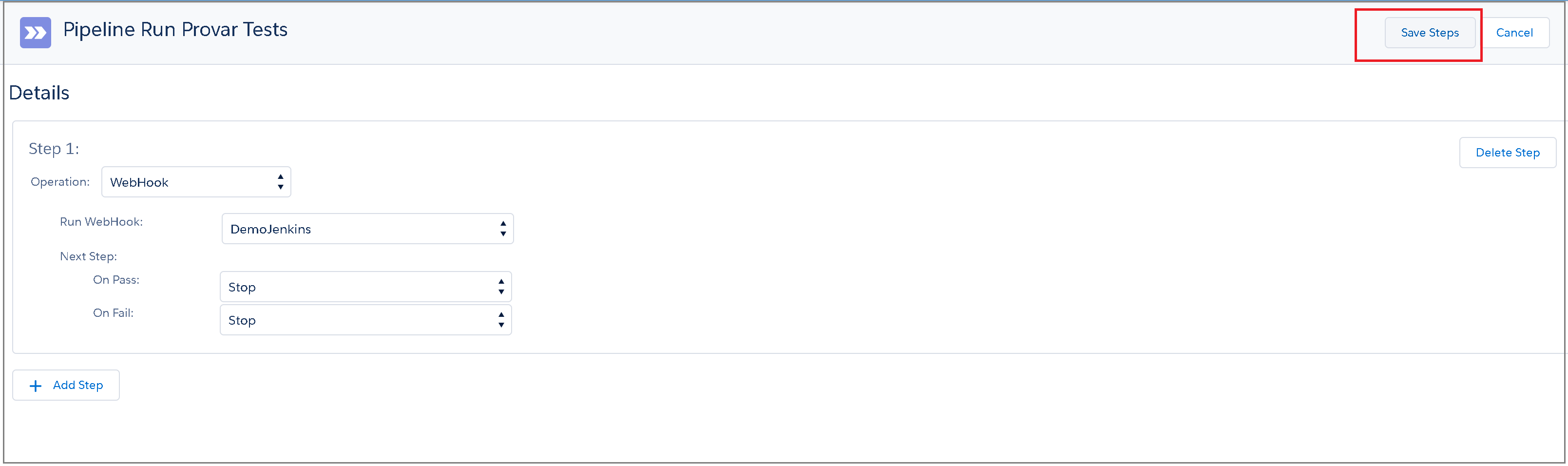

Once you’ve added all of the necessary Pipeline steps, click Save Steps.

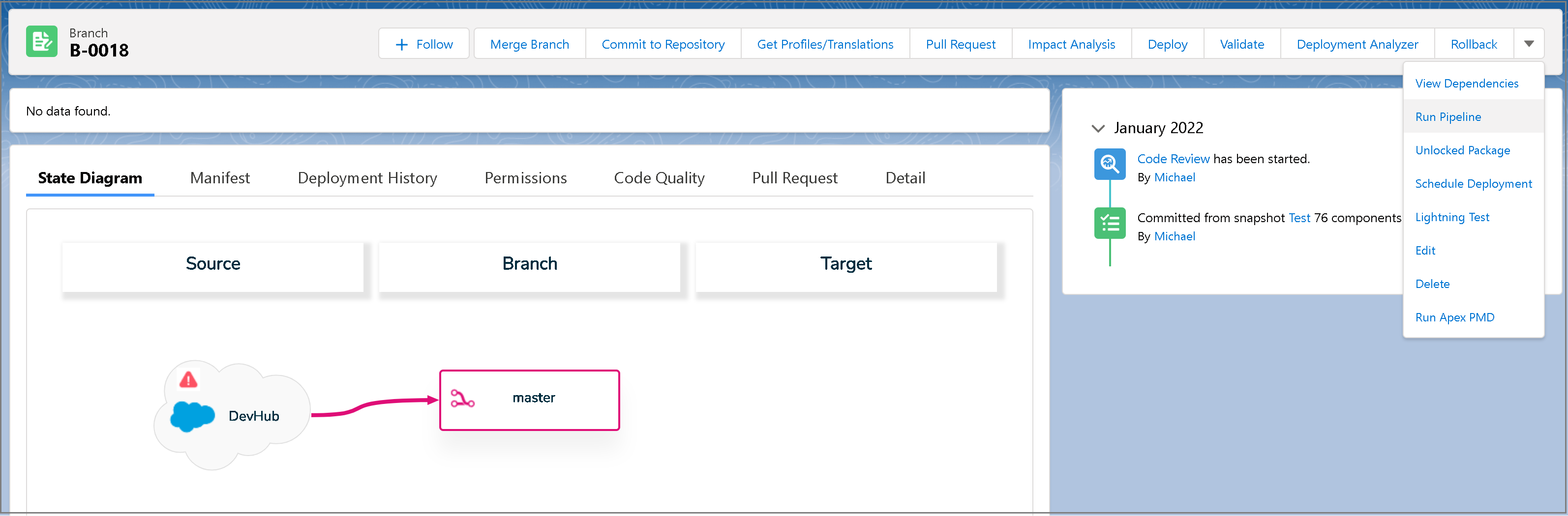

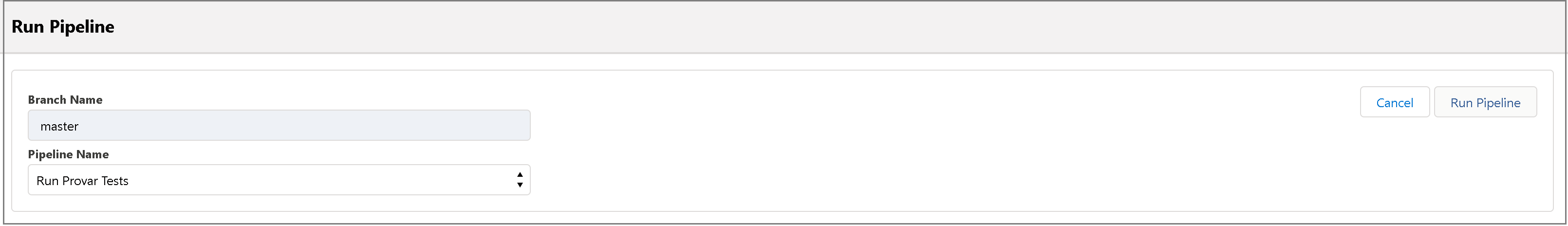

The Pipeline is now set up and ready to be queued. Navigate to the Branch view screen for the branch we committed to earlier. From the branch, we can run the Pipeline directly.

Select the Pipeline you just created.

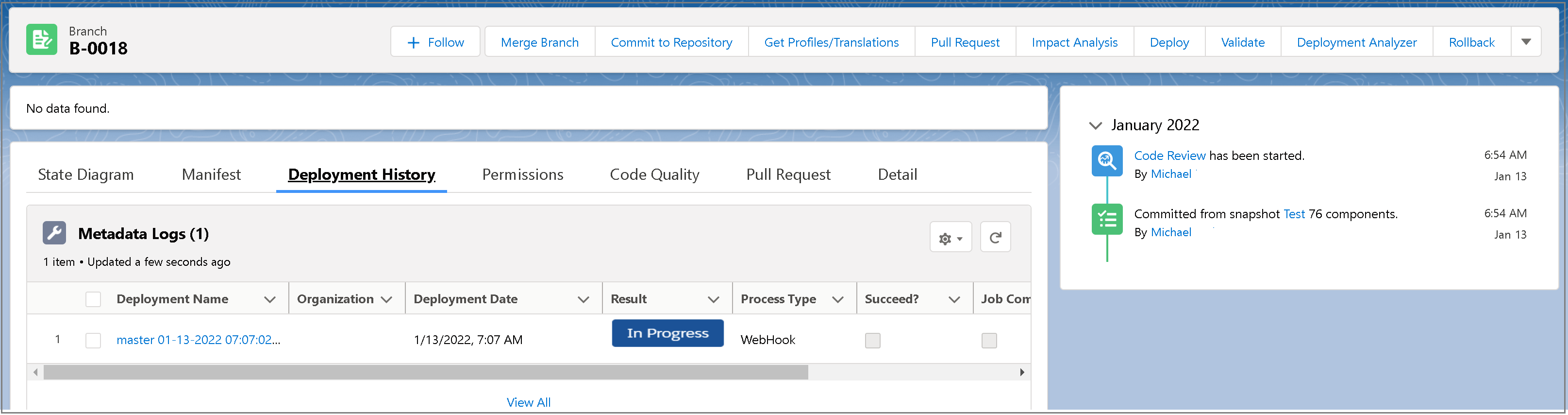

You can view the Pipeline results in the Pipeline Executions related list on the Pipeline view, or you can view the Deployment History from the branch view.

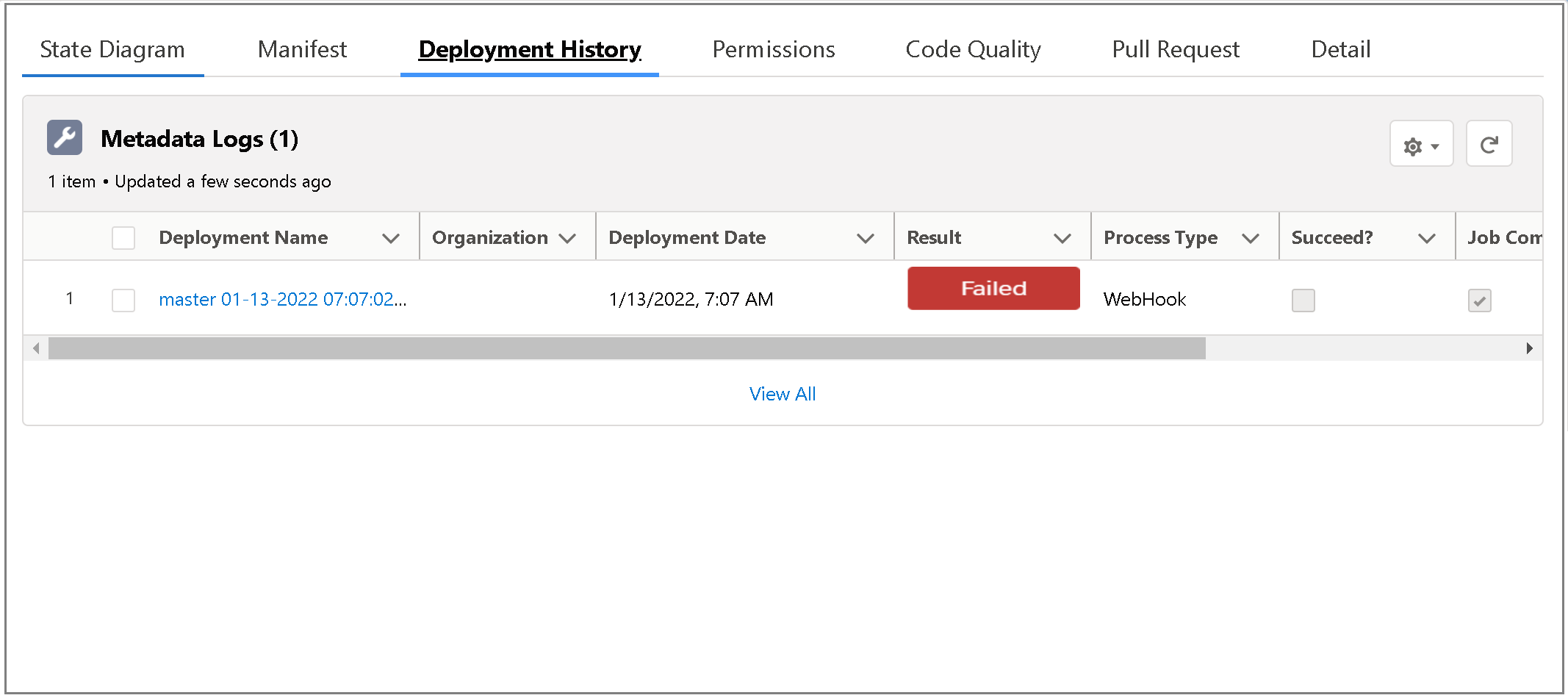

If you navigate to Deployment History > Metadata Logs, you’ll see the result of each Pipeline execution.

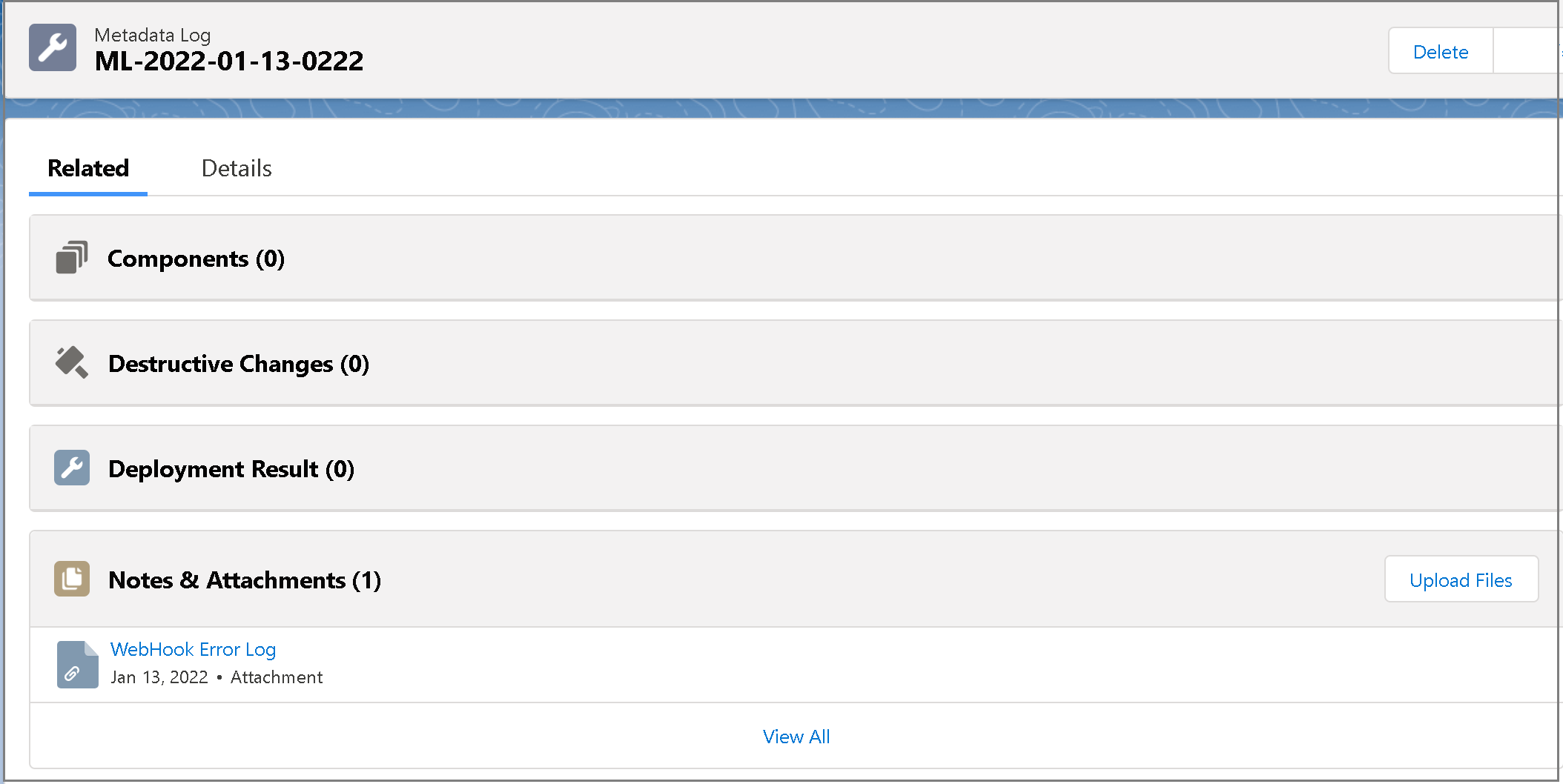

Navigate to one of these Metadata Logs, and the Related tab will show any relevant error logs in the Notes & Attachments related list.

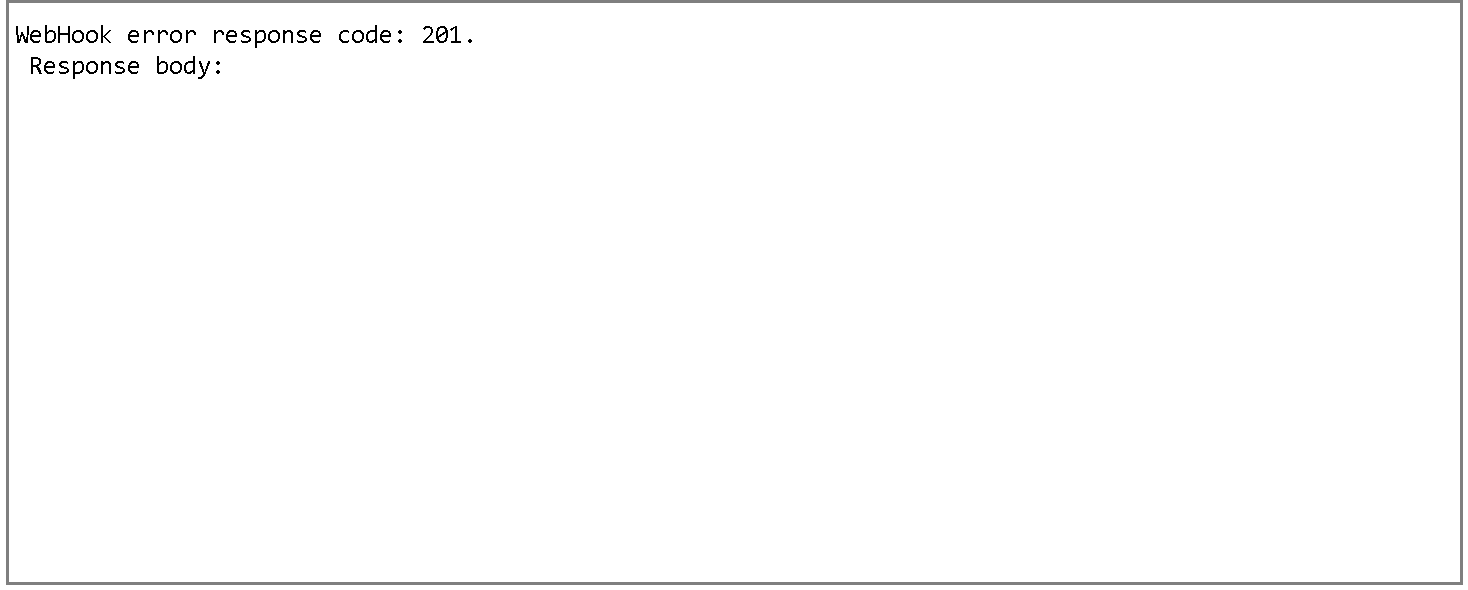

Note: This webhook step waits only for the callback response to return, not for the Jenkins job itself to complete. Currently, there is an error in Flosum that shows the deployment step as failed if the callback response is a 201 (which is what Jenkins returns when successfully triggering a job).

When this happens, the log below is attached, and the deployment history and pipeline step execution both show as failed, even though the call was successful.
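When reading these logs, it helps to interpret the HTTP status yourself rather than rely on the pass or fail flag. The sketch below is a hypothetical helper, not Flosum's own logic; it treats any 2xx status as a successful trigger and uses the general HTTP meanings of a few common error codes.

```python
def describe_callback_status(status_code):
    """Return a short explanation for the HTTP status of a Jenkins trigger call.

    Illustrative helper only; the wording of the messages is our own.
    """
    if status_code == 201:
        return "success: Jenkins accepted the request and queued the build"
    if 200 <= status_code < 300:
        return "success: the request was accepted"
    if status_code == 401:
        return "failure: the username or API token was rejected"
    if status_code == 403:
        return "failure: the user is not permitted to trigger this job"
    if status_code == 404:
        return "failure: no job was found at this URL"
    return "failure: unexpected status"


print(describe_callback_status(201))
```

Under this reading, a 201 in the attached log means the Jenkins job was queued, regardless of how the step is flagged.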

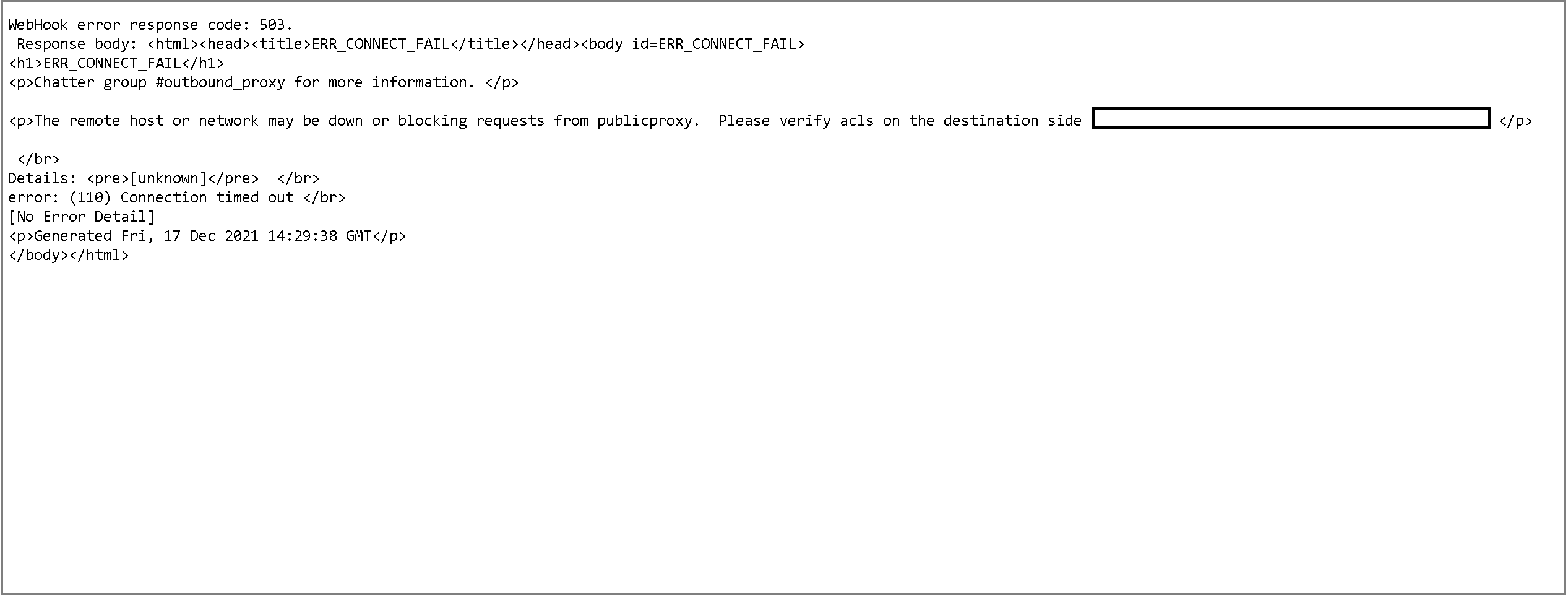

If you are queuing your pipeline and do not see anything occur on the Jenkins side, then you might be getting a timeout due to a network firewall blocking the connection. If it is blocked, you can check the deployment history metadata logs for a similar message.

You may also see the following error if your webhook URL is malformed or not sending properly: webhook error: Read timed out.
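One way to narrow down a timeout is to check whether the Jenkins host and port accept TCP connections at all. The following is a minimal sketch with a hypothetical `can_connect` helper. It only tests the network path from the machine that runs it, so a firewall that blocks Salesforce IP ranges specifically could still pass this check.

```python
import socket


def can_connect(host, port, timeout=5.0):
    """Return True if a TCP connection to host:port opens within the timeout."""
    try:
        # create_connection resolves the host name and attempts the TCP handshake.
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False


# Example with the host and port used on this page:
# can_connect("demojenkins.com", 8080)
```

If this returns False from a machine outside your network, the Jenkins server is most likely not reachable from Salesforce either.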

For a more detailed view of the results of the tests, you’ll have to navigate to the Jenkins job itself. You can also configure your build.xml in Automation to email test reports once completed.
