Provar Test Case Generation Setup & Usage Guide

One of the most requested features among Provar test designers and authors is the ability to generate Provar test cases using the latest advances in AI.

We are excited to share that we have officially launched the beta of our latest feature, Intelligent Test Case Generation with Provar.

This initial version comes with many advanced capabilities and helps us lay the groundwork for more test case generation enhancements in the future.

Goals & Expectations

Our goal is to help users generate Provar test cases for common scenarios more efficiently and to significantly reduce the manual effort of initial test authoring. Using AI to generate automation-ready Provar test cases is a complex, multi-step process that involves multiple models, several phases and context layers, and RAG-based Knowledge Base retrieval for additional grounding. As such, we do not expect every AI-generated test case to be ready for use immediately. We are targeting roughly 80% “readiness”, which means that most generated test cases will require some manual tweaking before they execute successfully.

Intelligent Test Case Generation (beta) does not include Page Object Generation or locator usage for test cases. This will be included in a future release. Currently, scenarios are focused on Salesforce flows and all supporting test steps.

Disclaimers, Data, & Security Information

AI Disclaimer

This feature uses Generative AI to generate Provar test cases. While we strive for accuracy and relevance, the generated content may not always be correct, complete, or suitable for every situation. Please review and verify the information before use and avoid inputting sensitive, confidential, or personally identifiable information.

As such, AI-generated Provar test cases are not meant to be executed out-of-the-box, and may require additional configuration in Provar Automation before tests can be run successfully.

Data Usage & Retention Policy

Intelligent Test Case Generation uses your Org’s context if you provide it as part of the Test Intent. Any information you attach to a Test Intent (the form used to generate test cases) can be reviewed by users in the Test Intents object. We do not permanently store or train on any data passed into test case generation in any way, shape, or form. For the duration of the beta, logs are retained for 7 days so the team has time to resolve any issues that arise. This troubleshooting focuses on improving test case validation and making structured fixes to the generation process; it is not tied to the user data or metadata passed into the context window.

Security

Provar uses a secure AWS cloud stack for all API-based integrations outside of Salesforce. Connections are made over HTTPS with properly configured credentials managed solely by our team. We do not use any Connected Apps from outside of your Salesforce Org, and any direct connection to an Org is made only at the user’s explicit discretion. These AI features connect to our AWS infrastructure in the same manner as the AI features in Provar Automation.

Get Started in 5 Steps

The steps to get started with Provar Test Case Generation are as follows:

- Install Provar Quality Hub 3.24.0+

- Enable Provar Test Case Generation feature

- Connect to System(s) and import Metadata Components

- Connect to Jira/ADO and import user stories and work items

- Generate context-aware test cases for Provar Automation!

Setup & Configuration

To get started, all you need to do is upgrade your Provar Quality Hub version to 3.24.0 or higher, which contains the disabled-by-default Provar Test Case Generation feature set.

If you’d like to try out this feature in a lower-level environment, feel free to install version 3.24.0 or higher in a Sandbox/testing environment.

Once installed, you can enable the Provar Test Case Generation feature in the Provar Quality Hub Setup app.

Note: Your org may still refer to Provar Quality Hub as Provar Manager, as name changes to Applications cannot be pushed to subscriber orgs.

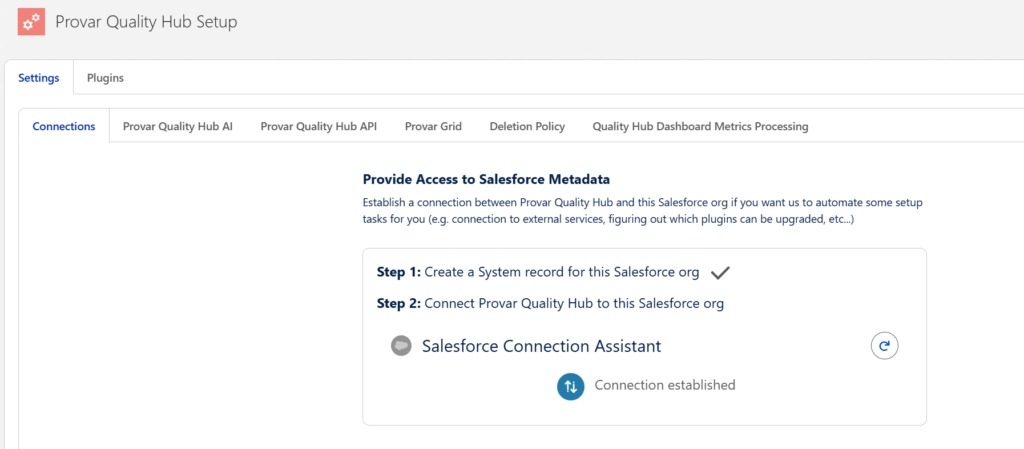

Before you can enable or modify any of the Provar Quality Hub Settings, you’ll need to establish a connection to this org using the Provar Quality Hub Setup > Settings > Connections tab.

Follow the Provide Access to Salesforce Metadata steps to give the Quality Hub application access to your org’s metadata.

This simply allows the managed package to utilize your org’s metadata directly in the org. No data is being sent or externally processed yet.

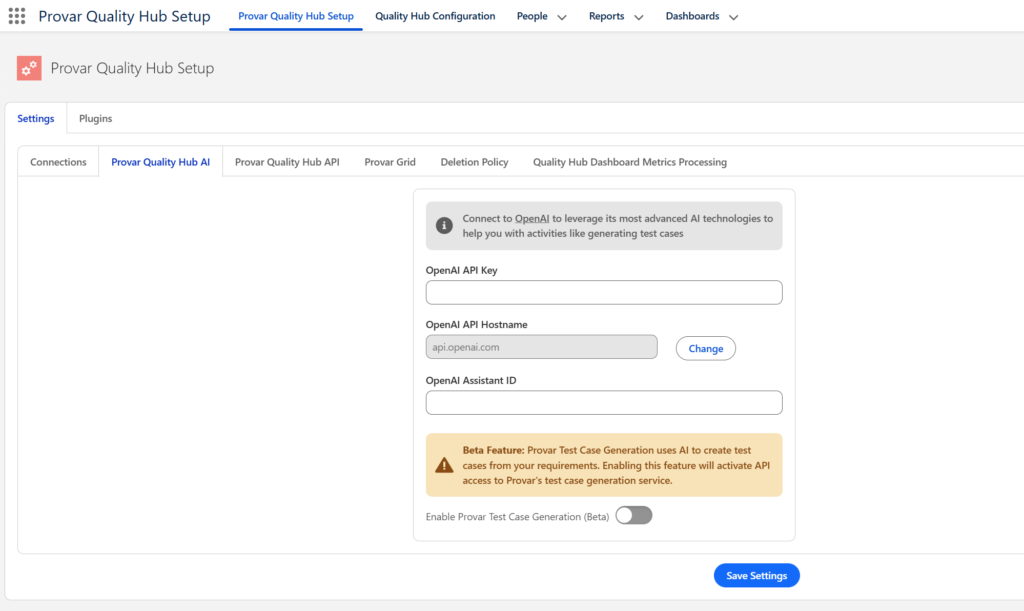

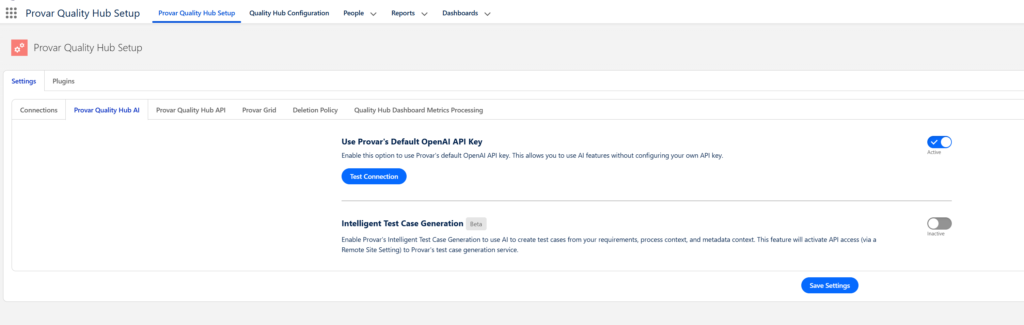

Once you have this connection established, navigate to Provar Quality Hub Setup > Settings > Provar Quality Hub AI.

3.24.0 UI

3.25.0+ UI

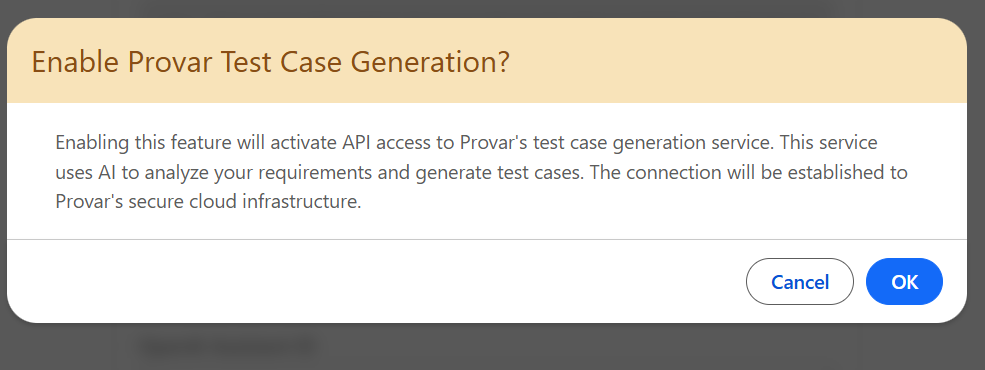

Enable the toggle option, then confirm in the follow-up screen.

Click Save Settings and the Test Case Generation feature will now be enabled.

Note: During the beta, this activates a Remote Site Setting (Provar_TCG_API) to connect directly to our AWS API Gateways. In future versions, this will be updated to route to our Provar Cloud instead, operating similarly to the way Provar Automation makes API callouts in its AI features.

Importing Context Items

For test case generation to be as effective and accurate as possible, we recommend that users first import their context items into Provar Quality Hub. Test case generation uses two primary context items: issues (user stories) and metadata components.

User Story Preparation

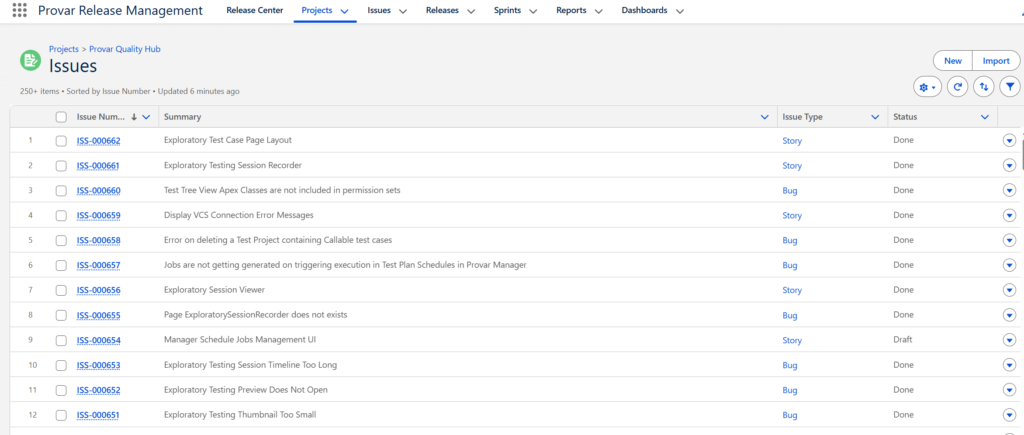

It is highly recommended that users who use Jira or Azure DevOps install those plugins and import their projects and issues into Provar Quality Hub.

More information can be found in their respective documentation on how to import projects and items from Jira or Azure DevOps.

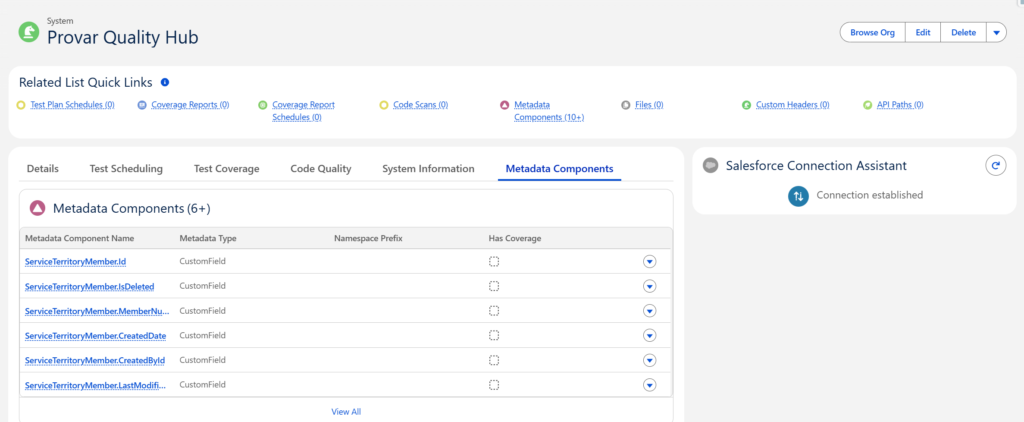

Metadata Preparation

Quality Hub supports importing the most commonly used Metadata Types for testing and coverage metrics.

For more information on how to connect to various Salesforce Orgs and import metadata components as context, see the full documentation page here.

Once you have imported some Metadata Components and Issues from either Jira or Azure DevOps, you are ready to begin generating context-aware test cases!

Using Provar Test Case Generation

Now that there is a solid context repository to pull from, generating test cases in Quality Hub for Provar Automation is straightforward.

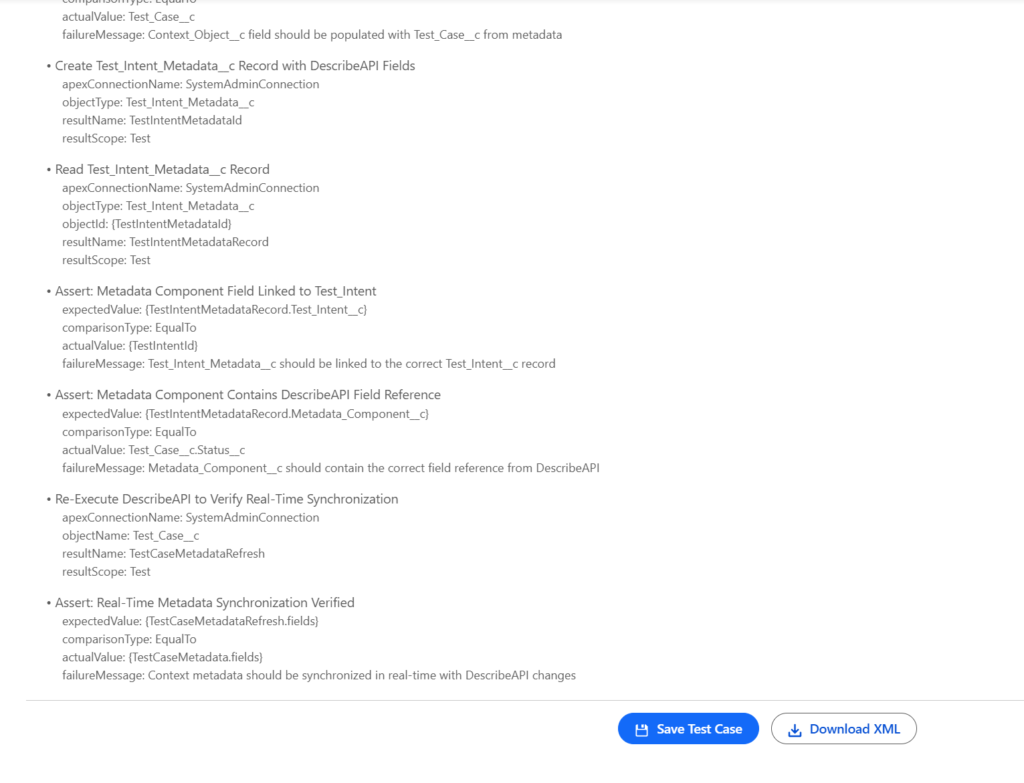

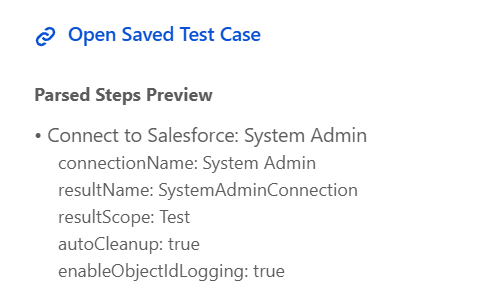

The basic structure of most generated test cases is something like this:

- Connect to Org under test

- Set up Test Data & Variables (optional)

- Perform CRUD operations in API and/or UI

- Validate/Assert field values in API and/or UI

- Perform data cleanup

Inputs

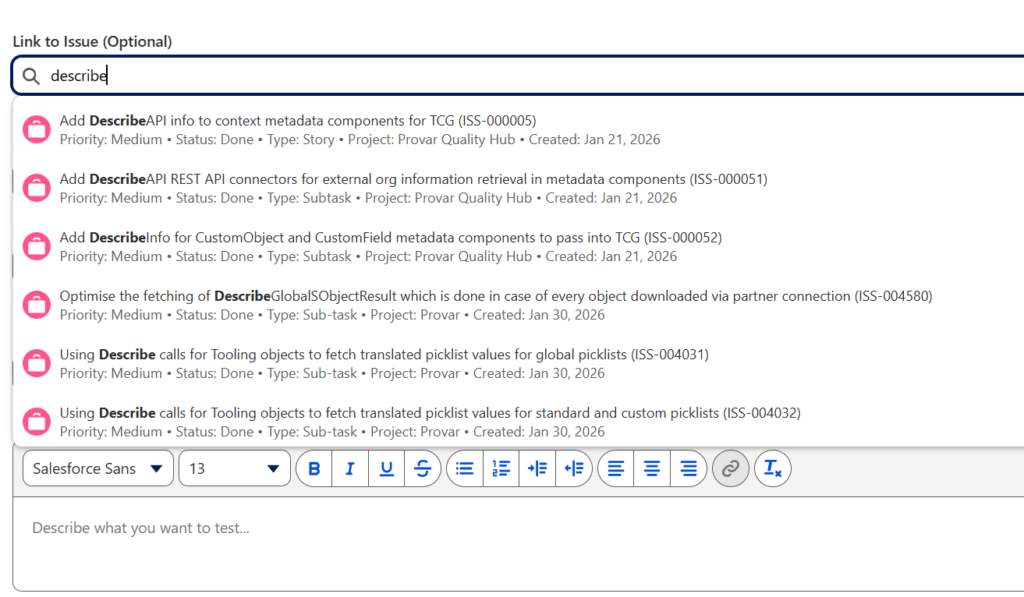

As with anything with AI, the more context and information you can provide, the better the results generally are. It is recommended to provide all available inputs to the generator, but the only required fields are Test Intent Name and Scenario Description.

The complete list of available inputs to Provar’s test case generation is as follows:

- Linked Issues (imported or created manually in Quality Hub)

  - Issue description, acceptance criteria, summary, and more are forwarded to the generator for context.

  - Uses a custom lookup that returns additional information so records are easy to distinguish.

- Select Saved Intent (Optional)

  - References an existing Test Intent so it can be reused for repeated test case generation attempts. Auto-populates every field previously saved to the Test Intent except Context Files:

    - Test Intent Name

    - Test Scenario Description

    - Test Persona

    - System Under Test

    - Test Project

    - Metadata Components (might take a moment to load if your org has imported over 1000 components)

    - Related Test Cases

    - Issues

  - Uses a custom lookup that returns additional information so records are easy to distinguish.

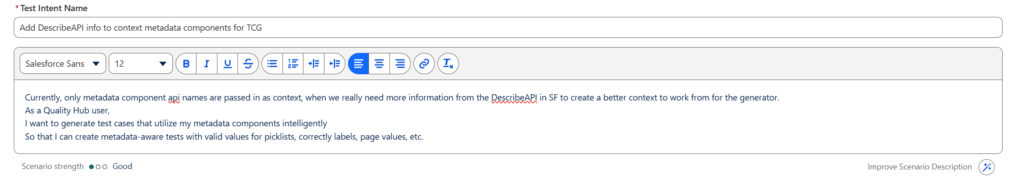

- Test Intent Name*

  - The name of the Test Intent created by this generation attempt. Capturing all of your inputs in a named record lets you find it later to retry similar generations or easily test multiple variations of your inputs.

  - This field is auto-populated by the Linked Issue or Select Saved Intent lookups.

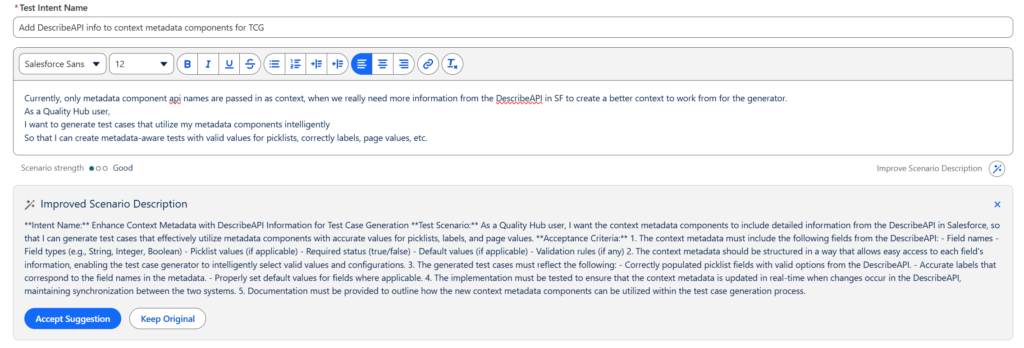

- Test Scenario Description*

  - Natural language input describing the test case you’d like to generate.

  - This field supports rich text formatting, including links and styling.

  - This field is auto-populated by the Linked Issue or Select Saved Intent lookups.

  - The Scenario strength indicator guides you toward providing more context. Action keywords such as “Navigate”, “Click”, “Type”, and “Assert” all improve the strength indicator.

  - If you have your own OpenAI API Key configured in the Provar Quality Hub > Settings > Provar Quality Hub AI tab, you can also use the optional “Improve Scenario Description” button. You’ll also need to enable the Remote Site Setting for OpenAI API endpoints in the Provar Quality Hub > Settings > Connections tab.

- Test Persona (Optional)

  - Sets the context for the user who is executing the test scenario. This helps to ground the generator in how to test and what role to assume.

- System Under Test (Optional)

  - Used to pull in related Metadata Components for context-aware generations.

- Test Project (Optional)

  - Allows users to save generated test cases to a specific Test Project, and in future iterations will also provide additional context around other tests included in the project.

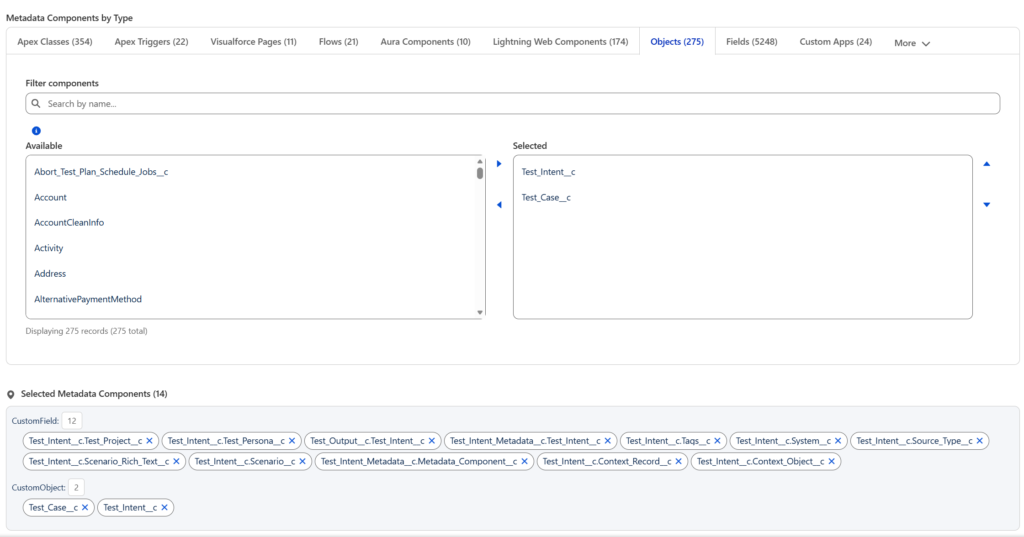

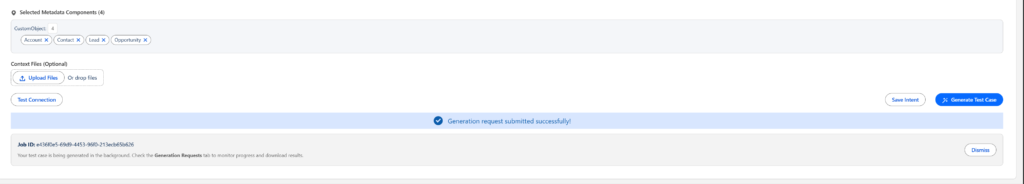

- Select Metadata Components

- Will load once you have set a System Under Test and imported Metadata Components to it.

- Users can select the Metadata Type tab, then search for their Metadata Component to add to the context for the Test Intent.

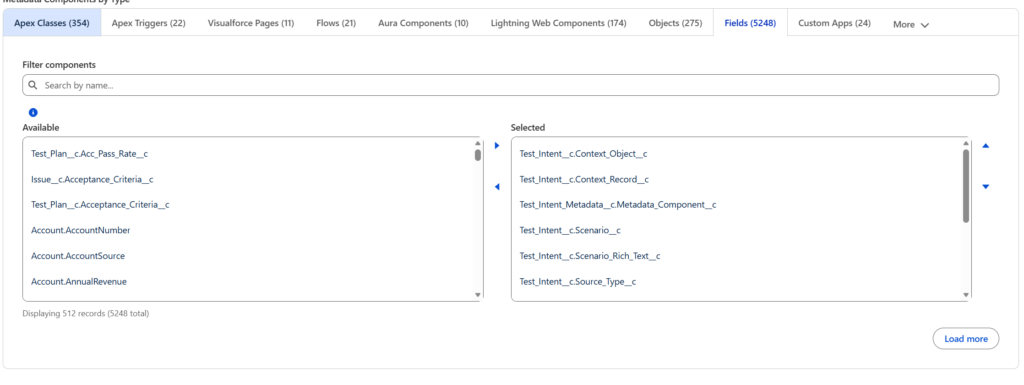

- Metadata Types with high volumes, such as Fields, will only display 500 records by default, but all records can be search for. The Load More option is there to support pagination and prevent Salesforce Governance Limits.

Metadata Types with Selected Metadata Components displayed

Fields Metadata Type with Load More option

- Context Files (Optional)_

- Files can be attached for context, similarly to how other AI tools work.

- General recommendation for file attachments is to keep them focused on the tasks at hand. These files will be parsed and extracted for the core content to send to the generator, and can help to provide more general guidance for your test cases or test descriptions.

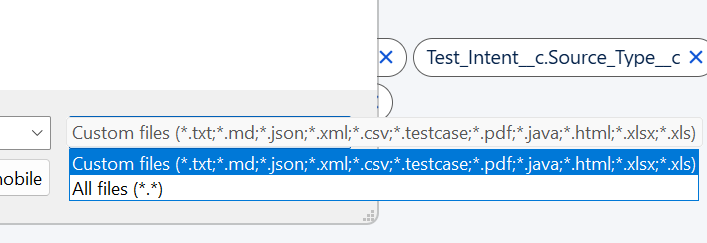

- Supported file types for now are:

- .html, .txt, .csv, .xml .pdf, .testcase, .json, .md, .java, .xls, and .xlsx

- Related Test Cases (Optional)

  - Users can search for and provide test cases already present in Quality Hub to add additional context for reference. This can help the generator to be more accurate by providing test cases with similar patterns, objects, apps, or pages.
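The inputs above can be modeled locally, for example when preparing many Test Intents before entering them in Quality Hub. The sketch below is a hypothetical mirror of the form, not a Quality Hub API: the class `IntentInputs` and its method `problems` are illustrative names. It encodes only what this guide states, namely that Test Intent Name and Scenario Description are required and that context files must use one of the supported extensions.

```python
from dataclasses import dataclass, field
from pathlib import Path
from typing import List, Optional

# Context file extensions listed as supported during the beta.
SUPPORTED_CONTEXT_EXTENSIONS = {
    ".html", ".txt", ".csv", ".xml", ".pdf", ".testcase",
    ".json", ".md", ".java", ".xls", ".xlsx",
}


@dataclass
class IntentInputs:
    """Hypothetical local mirror of the Test Intent form (not a Quality Hub API)."""

    name: str                         # Test Intent Name (required)
    scenario_description: str         # Test Scenario Description (required)
    test_persona: Optional[str] = None
    system_under_test: Optional[str] = None
    test_project: Optional[str] = None
    metadata_components: List[str] = field(default_factory=list)
    context_files: List[str] = field(default_factory=list)
    related_test_cases: List[str] = field(default_factory=list)
    linked_issues: List[str] = field(default_factory=list)

    def problems(self) -> List[str]:
        """Return input problems; an empty list means the checked inputs look valid."""
        issues = []
        if not self.name.strip():
            issues.append("Test Intent Name is required")
        if not self.scenario_description.strip():
            issues.append("Test Scenario Description is required")
        for path in self.context_files:
            # Path.suffix keeps only the last extension, e.g. ".xlsx".
            if Path(path).suffix.lower() not in SUPPORTED_CONTEXT_EXTENSIONS:
                issues.append(f"Unsupported context file type: {path}")
        return issues


draft = IntentInputs(
    name="Convert Lead",
    scenario_description="Navigate to Leads, create a Lead, then convert it.",
    context_files=["lead-spec.docx", "fields.xlsx"],
)
print(draft.problems())  # ['Unsupported context file type: lead-spec.docx']
```

All optional inputs default to empty, matching the form, where only the two starred fields must be filled in.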

Once all desired inputs have been set, users can click either Save Intent or Generate Test Case. Save Intent simply saves all of your inputs so you can reuse them later by referencing this Test Intent by name.

Generate Test Case will save the Test Intent and then perform the generation automatically.

Connection Test is a simple health check that verifies connectivity to the AWS API endpoints and the stability of the APIs.

Describe API Usage

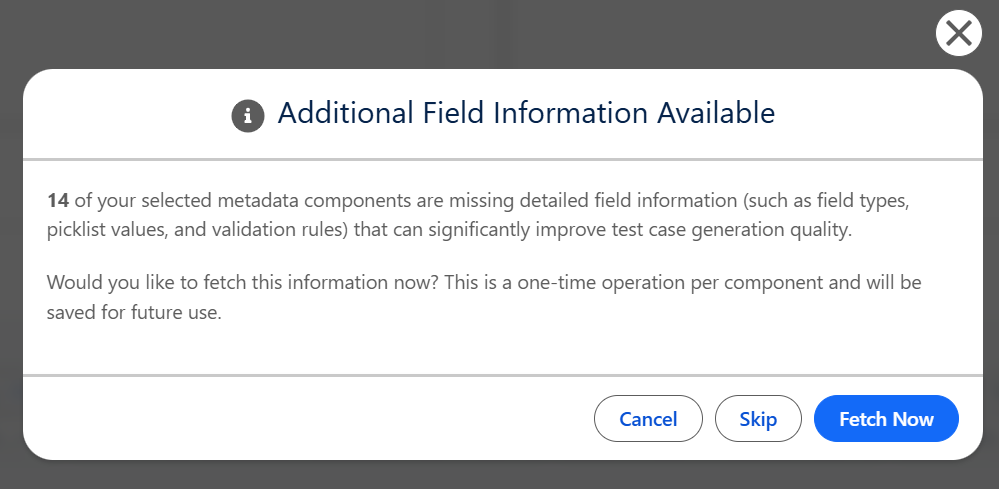

- Once you click Generate Test Case, you may see the pop-up dialog shown above.

  - This dialog lets you asynchronously fetch additional information about all of your selected Objects & Fields using the Salesforce Describe API. This helps reduce hallucinations in generated test cases for picklist values, data types, and more.

  - Clicking Skip does not prevent the test case from being generated; it only skips the additional Describe API fetch.

  - Clicking Fetch Now asynchronously retrieves additional information about your Objects & Fields and saves it to the Metadata Components for later use.

  - Note: If the objects or fields passed in as context are part of a managed package, the Describe API may not be able to retrieve additional information. In that case, this dialog appears each time you click Generate Test Case with those components attached as context.
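To illustrate the kind of information the Describe API contributes, the sketch below parses a trimmed sample shaped like a Salesforce REST sObject Describe response (`GET /services/data/vXX.X/sobjects/{SObject}/describe/`), whose `fields` entries carry `name`, `type`, and `picklistValues` (each with `value` and `active`). This is not how Quality Hub performs its own callout, which this guide does not document; the helper names, the default API version `60.0`, and the sample data are assumptions for illustration only.

```python
from typing import Any, Dict


def describe_path(sobject: str, api_version: str = "60.0") -> str:
    """REST path of the sObject Describe resource; the API version is an assumption."""
    return f"/services/data/v{api_version}/sobjects/{sobject}/describe/"


def summarize_fields(describe: Dict[str, Any]) -> Dict[str, Dict[str, Any]]:
    """Map each field name to its data type and its active picklist values."""
    summary = {}
    for fld in describe.get("fields", []):
        summary[fld["name"]] = {
            "type": fld["type"],
            "picklist": [
                entry["value"]
                for entry in fld.get("picklistValues", [])
                if entry.get("active")  # inactive values should not appear in tests
            ],
        }
    return summary


# Hand-written, trimmed sample shaped like a Describe response (not real org output).
sample = {
    "name": "Lead",
    "fields": [
        {"name": "Company", "type": "string", "picklistValues": []},
        {
            "name": "Status",
            "type": "picklist",
            "picklistValues": [
                {"value": "Open - Not Contacted", "active": True},
                {"value": "Retired Status", "active": False},
            ],
        },
    ],
}

print(describe_path("Lead"))  # /services/data/v60.0/sobjects/Lead/describe/
print(summarize_fields(sample)["Status"])  # {'type': 'picklist', 'picklist': ['Open - Not Contacted']}
```

Exact picklist values and data types like these are what help the generator avoid inventing values that do not exist in your org.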

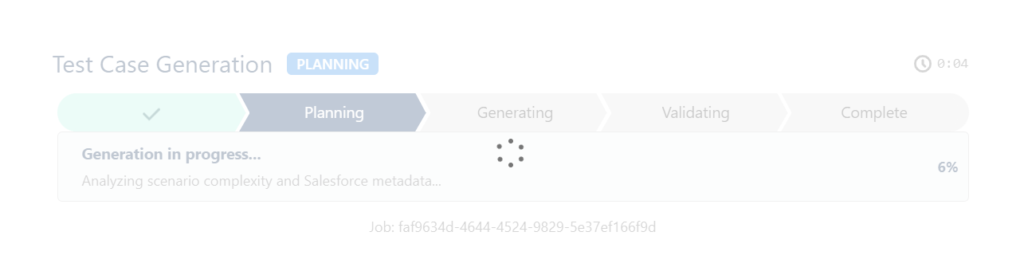

Test Generation Phases

You will see updated time estimates and feedback as generation progresses. Please note that most test case generations take around 2-4 minutes to complete.

To improve quality, reliability, and overall performance for the generation process, we have broken it into a multi-phase process and made it asynchronous in 3.25.0.

3.24.0 UI (Synchronous processing):

Phase 0: Queued

- An internal check that validates that all user inputs were properly sent to and received by the generator; nothing else of note happens in this phase.

Phase 1: Sizing & Planning

- Sizing checks the complexity of the inputs to ensure the proper model and token size are used, and usually takes only a few seconds.

- Planning analyzes the scenario and all inputs to create a rough outline of the test before automation generation begins. This breaks the work into pieces so that each agent can focus on its own part. Planning typically takes 15-30 seconds.

Phase 2: Generating

- In the generating phase, the sizing and planning output is passed into structured test case generation. This phase performs the bulk of the processing and can take anywhere from 90 to 180 seconds, averaging around 120 seconds.

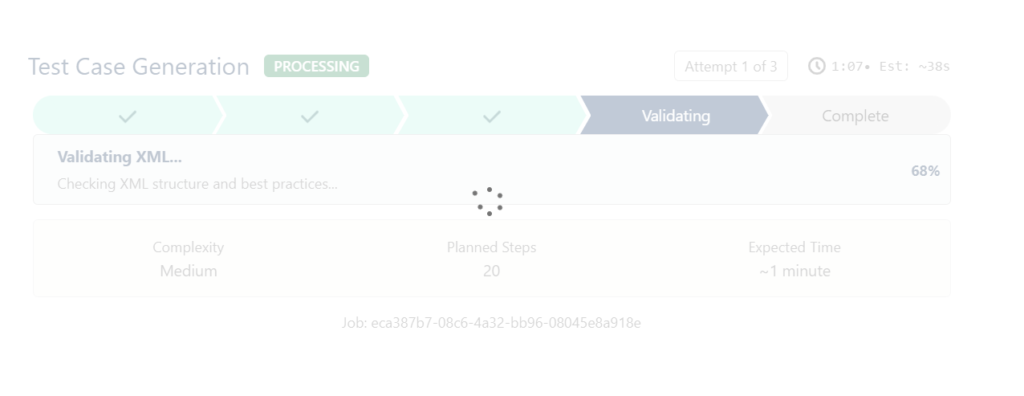

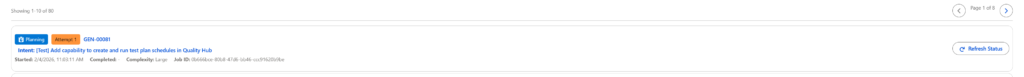

- The status indicator also shows the attempt # / 3, along with the processing time and estimated time remaining.

Phase 3: Validating

Once a test case has been generated and has passed schema validation, it is fully validated by our custom-built Provar Test Case Validator, which checks XML structure and argument usage and enforces best practices. If the quality score is below the Quality Score Threshold (80 by default), or if validation returns an error, the generation undergoes a repair process: the attempt number increments to 2, and the validator feeds all warnings and errors back into the repair process for surgical fixes to the test case.

If the test case still fails either check after the second attempt, it undergoes a full regeneration (attempt 3) with a higher-quality model and higher token usage, targeting the specific errors and warnings from the previous attempts.
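The attempt logic described in these phases can be sketched as a small control loop: validate, repair once, then fully regenerate, stopping as soon as a result passes. This is a hypothetical model of the behavior described here, not Provar’s implementation. `Validation`, `run_attempts`, and the four callables are illustrative stand-ins, and the sketch omits schema validation, sizing, and model selection. A result passes only if it has no errors and a score at or above the default threshold of 80.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Tuple

QUALITY_THRESHOLD = 80  # default Quality Score Threshold during the beta


@dataclass
class Validation:
    score: int
    errors: List[str] = field(default_factory=list)
    warnings: List[str] = field(default_factory=list)

    def passed(self) -> bool:
        # Pass only with no errors and a score at or above the threshold.
        return not self.errors and self.score >= QUALITY_THRESHOLD


def run_attempts(
    generate: Callable[[], str],
    repair: Callable[[str, Validation], str],
    regenerate: Callable[[List[Validation]], str],
    validate: Callable[[str], Validation],
) -> Tuple[str, int, List[Validation]]:
    """Return (test case, attempt that produced it, validation history)."""
    history: List[Validation] = []
    test_case = generate()                              # attempt 1: initial generation
    for attempt in (1, 2, 3):
        if attempt == 2:
            test_case = repair(test_case, history[-1])  # surgical fixes from feedback
        elif attempt == 3:
            test_case = regenerate(history)             # full regeneration using all feedback
        result = validate(test_case)
        history.append(result)
        if result.passed():
            break
    return test_case, attempt, history


# Stubbed example: attempt 1 scores below the threshold, the repair passes.
scores = {"v1": Validation(62, warnings=["unused variable"]), "v2": Validation(88)}
case, attempt, history = run_attempts(
    generate=lambda: "v1",
    repair=lambda tc, v: "v2",
    regenerate=lambda h: "v3",
    validate=lambda tc: scores[tc],
)
print(case, attempt, len(history))  # v2 2 2
```

Passing the whole history into `regenerate` reflects the description above, where the final attempt targets errors and warnings from all previous attempts rather than only the last one.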

Outputs

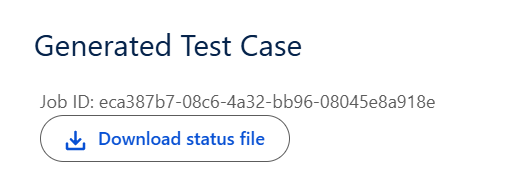

Once a test case has been successfully generated, several items are displayed on the page.

- Job ID

- This is primarily for internal use, to help with debugging or when working with Provar Support on the generation and validation logs.

- Status File

- Users will also have the option to download the full status.json file, which contains the full XML test case, the parsed test case (manual steps), along with other important fields returned by the API such as quality metrics and validation information.

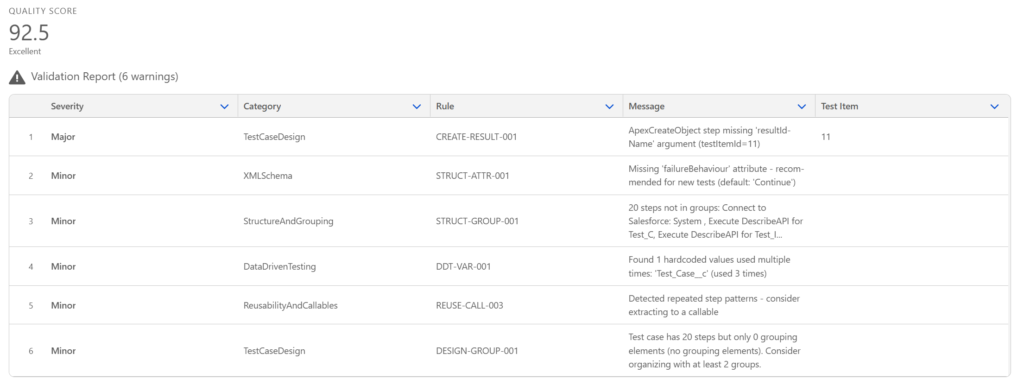

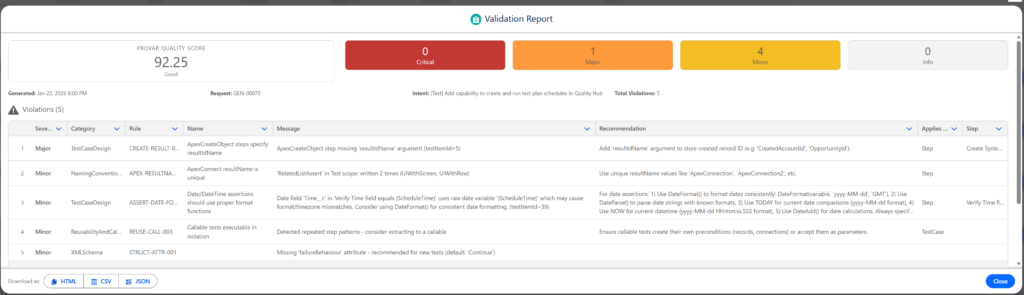

- Quality Score & Validation Report

- Every generated test case returns with a quality score and a full validation report with all of the surfaced warnings.

- We have built a custom Provar Test Case Validator that helps to produce this score and report.

- The default Quality Score Threshold for repair and regeneration attempts is 80. Future versions will allow users to raise this threshold to improve the overall average quality of returned test cases.

- Future iterations of the Validator will be made available to users, so that they can run it across their test cases and test projects to get a quality score and report client-side.

- Parsed Steps Preview

- Manual test steps parsed from the generated automated test case, for review.

- Users can save these manual test steps to Provar Quality Hub as test cases by clicking Save Test Case at the bottom of the page.

Once saved, the link to the saved test case will appear underneath the Validation Report table.

- Download XML

- This button downloads the full test case XML so that users can add it to their Provar Automation project, either by drag-and-drop or by copying the file directly into the tests folder of the project.
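The status.json download described above bundles the XML test case with the parsed steps, quality metrics, and validation information. Because this guide does not document the file’s key names, the sketch below assumes none: it walks the parsed JSON and reports the path of every string value that looks like an XML document, so you can locate the embedded test case yourself. The helper names are hypothetical, and the "starts with `<`" heuristic is a simplification.

```python
import json
from pathlib import Path
from typing import Any, Iterator, List, Tuple


def iter_strings(node: Any, path: str = "$") -> Iterator[Tuple[str, str]]:
    """Yield (JSON path, value) for every string value in parsed JSON."""
    if isinstance(node, dict):
        for key, value in node.items():
            yield from iter_strings(value, f"{path}.{key}")
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from iter_strings(value, f"{path}[{index}]")
    elif isinstance(node, str):
        yield path, node


def find_xml_payloads(status: Any) -> List[str]:
    """Return the JSON paths of string values that look like XML documents."""
    return [p for p, text in iter_strings(status) if text.lstrip().startswith("<")]


def load_status(file_path: str) -> Any:
    """Parse a downloaded status.json file."""
    return json.loads(Path(file_path).read_text(encoding="utf-8"))
```

For example, `find_xml_payloads(load_status("status.json"))` lists candidate locations of the test case XML in a downloaded file.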

In 3.25.0, retrieving generated artifacts and reports is much more seamless, and we have added a dedicated report for the Validation Reports generated alongside test cases.

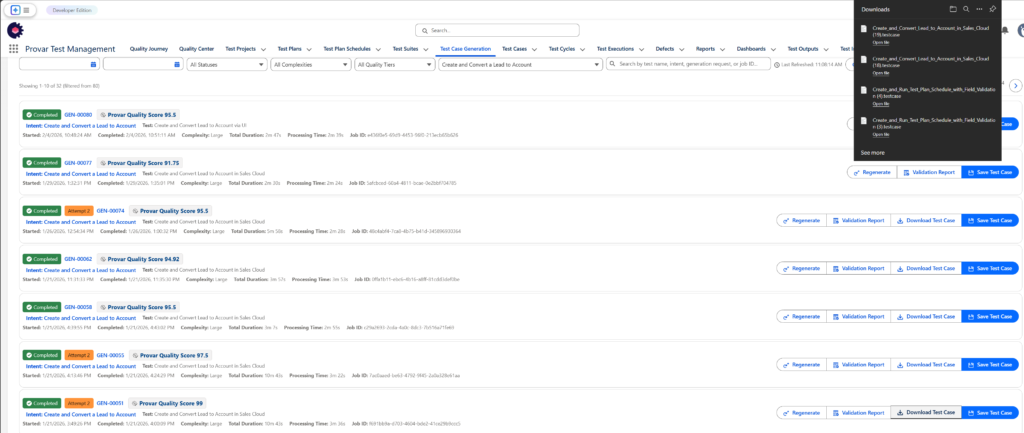

Generation Requests

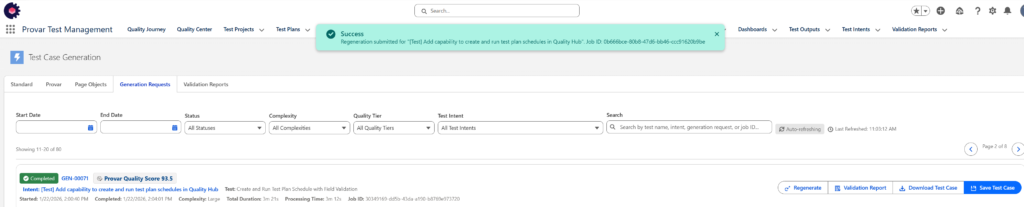

Test Case Generation is asynchronous in 3.25.0+, so Provar Test Case Generation now returns a simple job ID and redirects the user to the Generation Requests tab to monitor their generations.

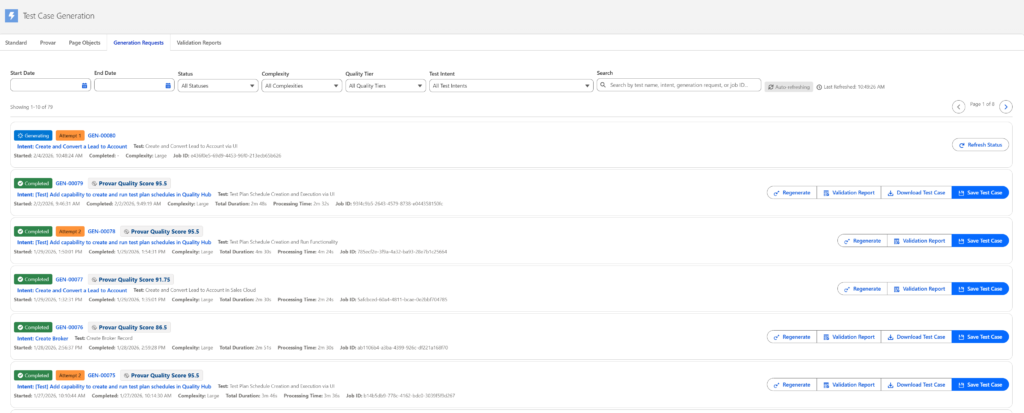

All of the phases described above for synchronous generation still apply, but each generation is now tracked as a job whose status is updated periodically in the Generation Requests tab.

This page supports filtering, searching, and pagination of all Generation Requests. While any requests are pending, it refreshes automatically, retrieving status updates every few seconds to stay in sync with back-end processing.

From this tab, users can also open Validation Reports, download test cases, and regenerate test cases.

Validation Reports are formatted similarly to other static code analysis tools, and provide the user with the severity, category, rule ID, name, message, recommendation, what the rule applies to (test case level, step, etc.), and the test step it applies to as well if applicable.

These reports are built on Validation Report records in the package and support standard Salesforce reporting and querying.

They can also be exported to HTML, CSV, or JSON for easier consumption in other systems or for local use.
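Once exported to JSON, a report can be turned into a remediation worklist. The sketch below assumes the export is a JSON array of finding objects with keys named `severity` and `testItemId`; those key names are hypothetical, so match them to the columns in your actual export. It keeps Major and Minor findings, lists Major first, and orders each group by test step (assuming numeric Test Item IDs). The rule IDs in the example are made-up sample data.

```python
import json
from collections import Counter
from typing import Any, Dict, List, Sequence

# Hypothetical key names; match them to the columns in your actual JSON export.
SEVERITY_KEY = "severity"
TEST_ITEM_KEY = "testItemId"


def severity_counts(findings: List[Dict[str, Any]]) -> Counter:
    """Count findings per severity level."""
    return Counter(f.get(SEVERITY_KEY, "Unknown") for f in findings)


def worklist(
    findings: List[Dict[str, Any]], severities: Sequence[str] = ("Major", "Minor")
) -> List[Dict[str, Any]]:
    """Keep the requested severities, most severe first, then by test step."""
    rank = {level: i for i, level in enumerate(severities)}
    picked = [f for f in findings if f.get(SEVERITY_KEY) in rank]
    # Findings without a Test Item ID (test-case level) sort before step-level ones.
    return sorted(picked, key=lambda f: (rank[f[SEVERITY_KEY]], f.get(TEST_ITEM_KEY) or 0))


def load_findings(text: str) -> List[Dict[str, Any]]:
    """Parse an exported report, assumed to be a JSON array of finding objects."""
    return json.loads(text)


exported = (
    '[{"severity": "Minor", "ruleId": "R2", "testItemId": 5},'
    ' {"severity": "Major", "ruleId": "R1", "testItemId": 7},'
    ' {"severity": "Major", "ruleId": "R3", "testItemId": 2}]'
)
findings = load_findings(exported)
print([f["ruleId"] for f in worklist(findings)])  # ['R3', 'R1', 'R2']
```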

Clicking Download Test Case downloads the full *.testcase XML file for the generated test, which you can copy directly into your Provar Automation project. See Importing into Provar Automation below for details.

If the user clicks Regenerate, the same Test Intent is used to regenerate the test case, along with the validation warnings and errors from the previous generation, so that the system can address those specific issues. Regeneration still fully reprocesses the test case, however, so it takes about as long as the original generation.

Note: Saving full test cases directly into Quality Hub from the generated tests will be supported in a future version.

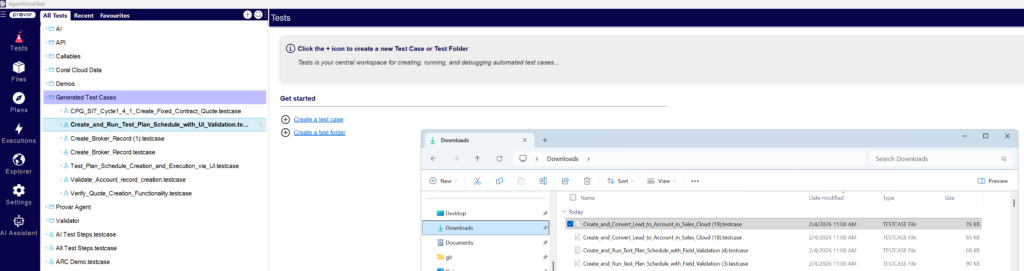

Importing into Provar Automation

Once tests have been generated and downloaded from the Generation Requests tab, they can be copied directly into Provar Automation projects (V2 or V3). Because the generation and validation engine does not account for differences between V2 and V3, assume the test case may include V3 capabilities and import it into a V3 project for guaranteed compatibility.

Launch Provar Automation and open a new or existing project. We strongly recommend placing these test cases in their own dedicated folder, at least initially, so they can be properly reviewed for validity and tweaked for quality and accuracy.

Copy the file from the Downloads folder and paste it into your dedicated Generated Tests folder, or you can drag-and-drop it like so:

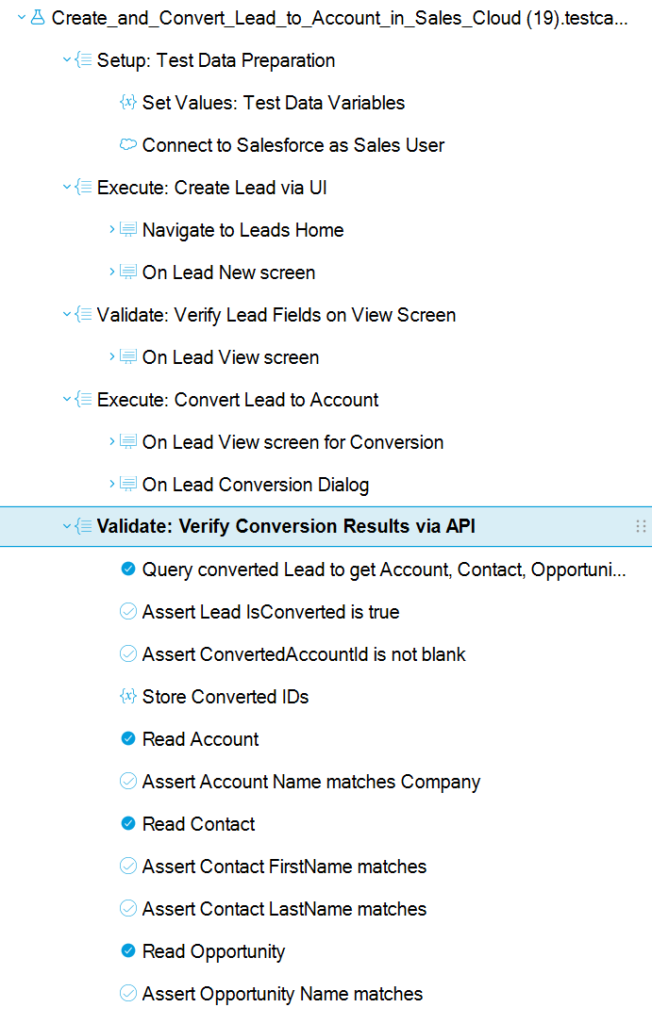

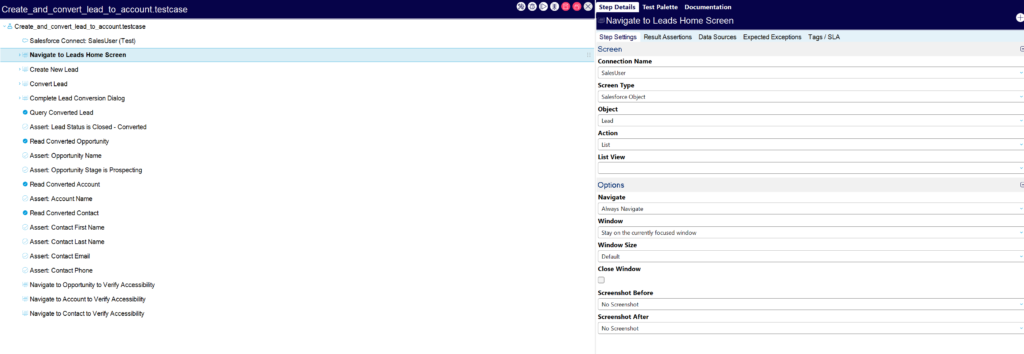
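If you prefer to script the copy step instead of using drag-and-drop, the sketch below copies every downloaded `*.testcase` file into a dedicated folder under the project’s tests folder. It assumes the tests folder sits at the project root, and the `Generated Tests` folder name and the function name are hypothetical. Existing files are skipped so that a copy you have already edited is never overwritten.

```python
import shutil
from pathlib import Path
from typing import List


def copy_generated_tests(
    downloads: Path, project_root: Path, folder_name: str = "Generated Tests"
) -> List[Path]:
    """Copy every *.testcase file in `downloads` into <project>/tests/<folder_name>."""
    target = project_root / "tests" / folder_name
    target.mkdir(parents=True, exist_ok=True)
    copied = []
    for src in sorted(downloads.glob("*.testcase")):
        dest = target / src.name
        if dest.exists():
            continue  # never overwrite a copy you may already have edited
        shutil.copy2(src, dest)  # copy2 also preserves file timestamps
        copied.append(dest)
    return copied
```

For example, `copy_generated_tests(Path.home() / "Downloads", Path("/path/to/MyProvarProject"))` returns the list of files it copied.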

Manually verify that the test case loads properly by selecting it and checking that its steps are created as expected (for example, a Create and Convert Lead to Account flow in Sales Cloud).

From here, you’ll need to do some manual verification, but you can already see the power of Intelligent Test Case Generation with Provar!

Debugging & tweaking test issues

All generative AI output should be reviewed before it is accepted, and the same goes for test cases generated by Provar’s Intelligent Test Case Generation feature.

Once you have downloaded and copied the generated test case into Provar Automation, there are some typical spot checks you’ll want to verify.

Validation Report Table

First and foremost, review the Validation Report table after generation so you can remediate any Major or Minor warnings in the generated test case. Where applicable, Test Item IDs are provided and correspond to the test step number in the generated test case.

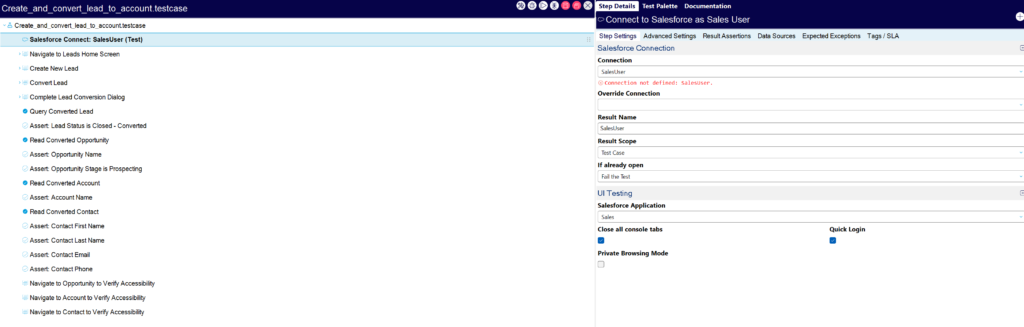

Salesforce (Apex) Connect steps

Because every Provar connection is referenced internally by a connectionId, expect that every Salesforce Connect step will need to be configured to point to your target connection as created in Provar Automation.

To resolve this, click the Connection picklist and select your relevant Salesforce Connection.

Locators

Because Page Object Generation is not currently part of Intelligent Test Case Generation (beta), users will need to verify any test cases that interact with UI elements in non-metadata supported fields.

Field Usage in Apex Steps

Always validate every field referenced in Apex CRUD steps, i.e. Create, Read, Update, and Delete steps. Passing the relevant objects and fields in as context makes the test case less likely to use incorrect field names.

UI On Screen Steps

All UI On Screen steps should be validated and configured to ensure they point to the correct Screen Type, Object, Action, List View, and Navigation setting.

Note: Generated test cases are required to use Navigate = Always for the first On Screen step and Don’t Navigate for subsequent steps, unless there is an explicit reason to do otherwise.

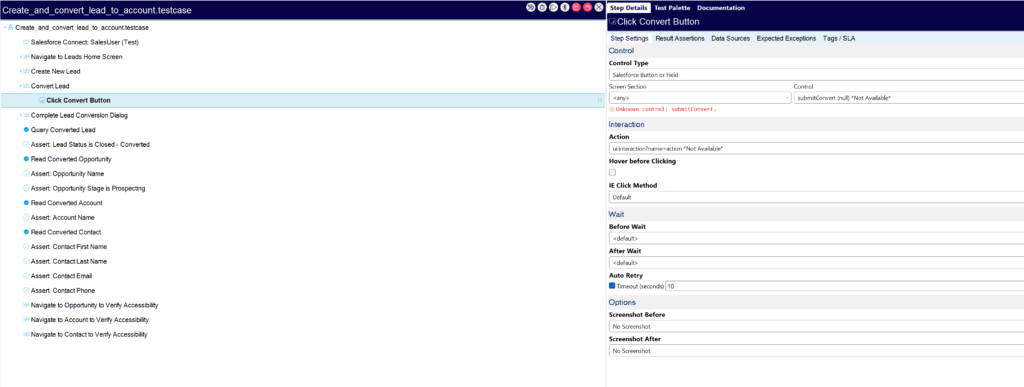
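When spot-checking a long test case, the Navigate convention above can be checked mechanically. The sketch below takes each On Screen step’s Navigate value, read by hand in test order, because this guide does not document the .testcase XML element names needed to extract them automatically. The function name and the exact label strings are assumptions. A reported position is something to review, not necessarily an error, since the convention allows explicit exceptions.

```python
from typing import List

FIRST_STEP = "Always"
LATER_STEPS = "Don't Navigate"


def navigate_deviations(settings: List[str]) -> List[int]:
    """Return 1-based positions of On Screen steps that differ from the convention.

    `settings` holds each On Screen step's Navigate value, in test order.
    """
    deviations = []
    for position, setting in enumerate(settings, start=1):
        expected = FIRST_STEP if position == 1 else LATER_STEPS
        if setting != expected:
            deviations.append(position)
    return deviations


print(navigate_deviations(["Always", "Don't Navigate", "Always"]))  # [3]
```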

UI Action Steps

UI Action steps (e.g. Click, Set text, Assert) should be spot-checked for accuracy once generated. Occasionally, generated steps may use incorrect Screen Section or Control picklist values. These are multi-argument fields in Provar’s test case XML structure that require several layers of validation to get right, so review each generated test case for accuracy.

The Control picklist is more likely to be inaccurate than the Screen Section. Correcting the Control picklist first often resolves multiple errors; then simply refresh the test case or click into each step so it reloads.

Validation Failures in Provar Automation UI

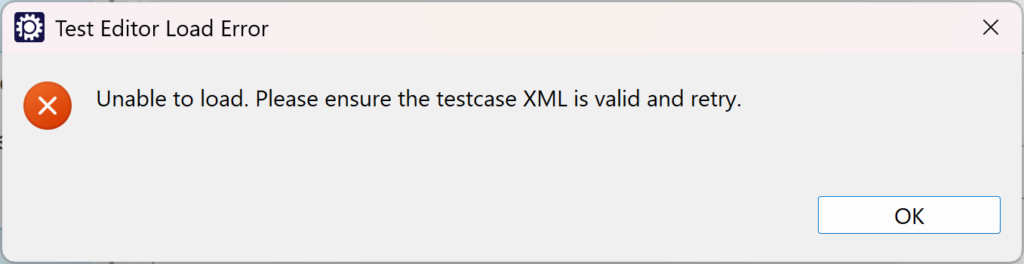

Occasionally, generated test cases may fail to load in Provar Automation at all.

The screenshot above shows an example of what you might see in Provar after generating a test case and copying it into Provar Automation.

We are continually improving our validation process to prevent this, but edge cases may still occur.

In most cases, the best solution is to regenerate the test case, adding whatever feedback you can to the Scenario Description to improve quality.
